You’ve trialed two AI PPC tools this year. Performance didn’t move in a way you could attribute to either of them. The problem is not the tools. It’s that every article recommending AI paid advertising tools skips the one step that actually matters: figuring out which functional problem you need to solve before picking anything. I’ve run paid campaigns for BFSI, retail, and e-commerce clients, including Axis Bank, Croma, and Burger King. The accounts that got lift from AI tooling were not the ones with the most tools. They were the ones that fixed their measurement layer first.

Your AI Tool Is Only as Smart as the Data You Feed It

Most practitioners evaluate AI PPC tools the same way. They read a vendor case study, note the ROAS lift claimed, and add the tool to an account that is already running. The measurement layer underneath is assumed to be fine.

That assumption is where most tool evaluations break down.

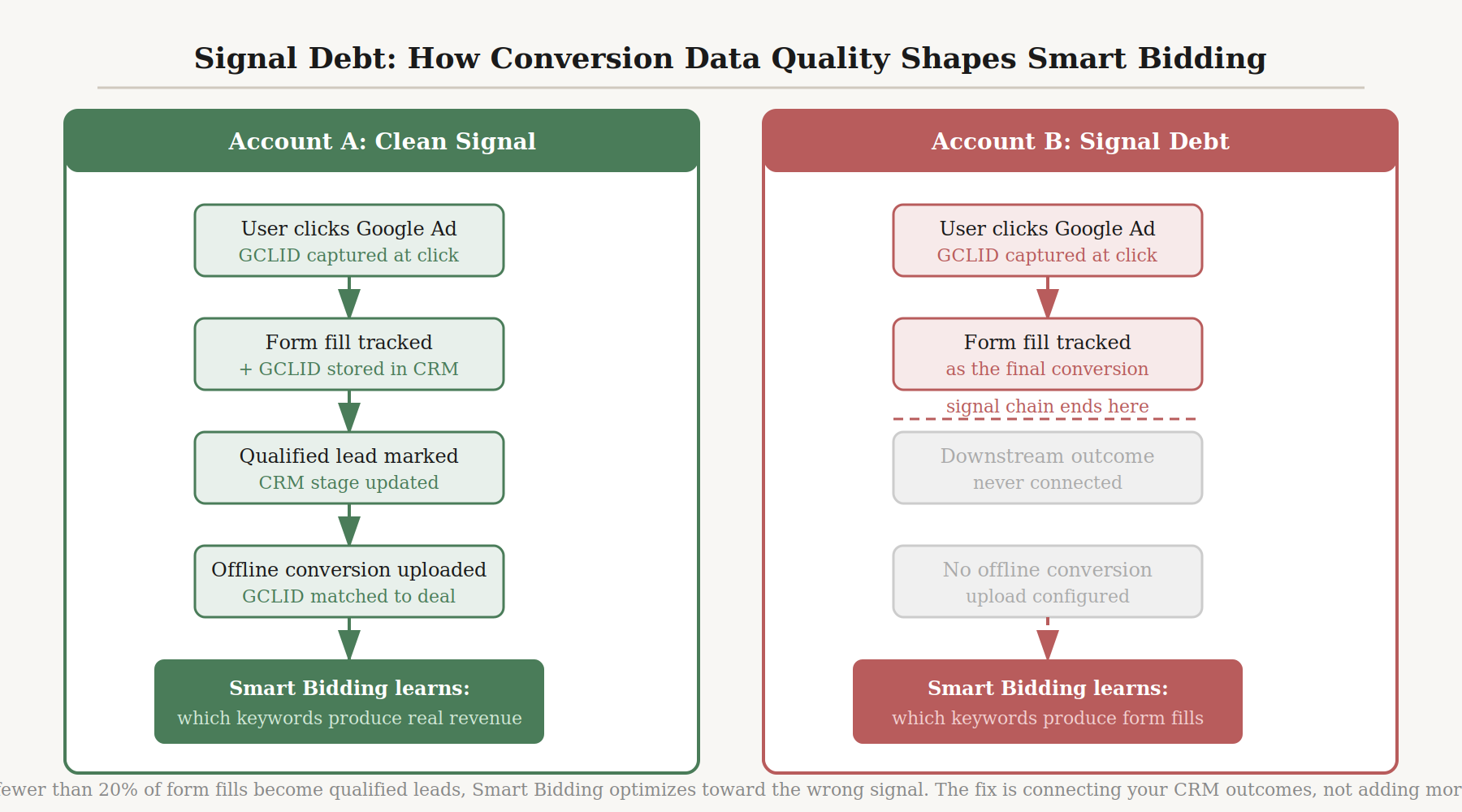

Here is what is actually happening. Smart Bidding and every third-party tool that layers on top of it make decisions based on the conversion signals they can observe. If your conversion events are misconfigured, if your attribution window is shorter than your actual sales cycle, or if you are not passing downstream outcomes back into the platform, the AI is learning from the wrong data. It does not know this. It optimizes toward the signals it has. The result is an algorithm that is working hard in exactly the wrong direction.

This is what I call Signal Debt: the accumulation of poor-quality conversion signals that causes AI bidding systems to learn the wrong patterns over time, producing optimization that feels active but is moving away from your actual business outcomes.

Most lists of AI paid advertising tools solve the wrong problem. The bottleneck in most paid advertising accounts is not automation capacity; it is data quality. Adding AI tools to a broken measurement stack amplifies waste instead of eliminating it.

I saw this directly while running paid campaigns for BFSI clients. One account was tracking form fills as the primary conversion event. On the surface, the data looked healthy: volume was there, conversion rates were visible, and Smart Bidding had something to optimize toward. But fewer than 20% of those form fills were converting to qualified leads downstream. The keywords driving the highest form-fill rates were not the same keywords driving the highest-quality leads.

Smart Bidding was optimizing hard toward a metric that had almost no relationship to the business outcome we cared about.
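The mismatch is easy to check once form fills and CRM outcomes sit in one table. A minimal Python sketch, assuming a hypothetical export with `keyword`, `form_fills`, and `qualified_leads` columns; the keywords and counts below are invented for illustration, not figures from the account described here.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class KeywordStats:
    keyword: str
    form_fills: int       # conversions the ad platform can see
    qualified_leads: int  # downstream outcomes recorded in the CRM


def qualification_rate(row: KeywordStats) -> float:
    """Share of form fills that became qualified leads (0.0 if there were none)."""
    return row.qualified_leads / row.form_fills if row.form_fills else 0.0


def top_keywords(rows: List[KeywordStats],
                 metric: Callable[[KeywordStats], float],
                 n: int = 2) -> List[str]:
    """Keywords ranked highest by the given metric, best first."""
    return [r.keyword for r in sorted(rows, key=metric, reverse=True)[:n]]


# Hypothetical export: ad-platform form fills joined to CRM qualification status.
rows = [
    KeywordStats("free credit card", 400, 20),
    KeywordStats("credit card offers", 250, 30),
    KeywordStats("premium credit card apply", 60, 33),
    KeywordStats("travel credit card lounge", 45, 27),
]

overall = sum(r.qualified_leads for r in rows) / sum(r.form_fills for r in rows)
print(f"Overall qualification rate: {overall:.1%}")
print("Top by form fills:        ", top_keywords(rows, lambda r: r.form_fills))
print("Top by qualification rate:", top_keywords(rows, qualification_rate))
```

In this made-up data the two rankings share no keywords, which is the pattern that signals Smart Bidding is being trained on the wrong event.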

We connected CRM data back into the platform via offline conversion import, matching funded account approvals to their original Google click IDs. Smart Bidding’s targeting shifted noticeably within three weeks. Terms that had been suppressed because they showed lower form-fill rates started receiving more budget once the algorithm could see they were producing qualified leads at a much higher rate than the form-fill data suggested. The bid strategy did not change. No new tools were added. The signal changed, and the optimization followed.
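The join itself is simple once the CRM stores the click ID. A minimal sketch, assuming each CRM record carries hypothetical `gclid`, `status`, `approved_at`, `value`, and `currency` fields, and that the conversion action in Google Ads is named `Funded Account` (also hypothetical). The column headers are assumed to follow Google’s offline conversion upload template; check them, and the accepted time formats, against the current template before uploading.

```python
import csv
import io
from datetime import datetime, timedelta, timezone

# Column headers for a click-based offline conversion upload (assumed; verify
# against Google's current template).
HEADERS = ["Google Click ID", "Conversion Name", "Conversion Time",
           "Conversion Value", "Conversion Currency"]

IST = timezone(timedelta(hours=5, minutes=30))  # example account time zone


def build_offline_conversions(crm_records, conversion_name="Funded Account"):
    """Turn CRM outcomes into upload rows, skipping records with no GCLID.

    crm_records: iterable of dicts with hypothetical keys
    'gclid', 'status', 'approved_at' (timezone-aware datetime), 'value', 'currency'.
    """
    rows = []
    for rec in crm_records:
        if not rec.get("gclid") or rec.get("status") != "funded":
            continue  # no click ID to match, or not the outcome we import
        rows.append([
            rec["gclid"],
            conversion_name,
            # Formats as e.g. "2024-03-04 10:15:00+05:30"
            rec["approved_at"].isoformat(sep=" ", timespec="seconds"),
            f'{rec["value"]:.2f}',
            rec["currency"],
        ])
    return rows


def to_csv(rows):
    """Serialize header plus rows to CSV text."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(HEADERS)
    writer.writerows(rows)
    return buf.getvalue()


# Hypothetical CRM export; the click IDs are placeholders.
crm = [
    {"gclid": "EAIaIQobChMI_example1", "status": "funded",
     "approved_at": datetime(2024, 3, 4, 10, 15, tzinfo=IST),
     "value": 1500, "currency": "INR"},
    {"gclid": "", "status": "funded",  # lead created without a captured GCLID
     "approved_at": datetime(2024, 3, 5, 9, 0, tzinfo=IST),
     "value": 1500, "currency": "INR"},
    {"gclid": "EAIaIQobChMI_example2", "status": "rejected",
     "approved_at": datetime(2024, 3, 6, 11, 0, tzinfo=IST),
     "value": 0, "currency": "INR"},
]

print(to_csv(build_offline_conversions(crm)))
```

The second record shows why capture at lead creation matters: a funded account with no stored click ID simply cannot be sent back to Smart Bidding.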

According to Google’s own Ads Help documentation, advertisers who use first-party data alongside click IDs for offline measurement see a median 10% increase in conversions compared to those using standard offline conversion imports. Ad attribution benchmarks show the scale of this problem across the industry: multi-touch model adoption remains low even among sophisticated advertisers, which means most accounts are running AI bidding on last-click or same-session attribution that systematically misrepresents where qualified buyers actually come from.

Can AI manage PPC campaigns automatically? Yes. But the quality of that automation is bounded entirely by the quality of the conversion signal feeding it. The ceiling is lower than most vendors will tell you. And raising it costs nothing except the technical work of connecting your CRM.

Before evaluating any AI PPC tool, audit three things. First: which conversion events you are tracking, and whether they predict revenue rather than just activity. Second: whether your attribution window matches your actual sales cycle. If deals take 45 days and your window is 30, you are systematically discounting your highest-intent keywords. Third: whether downstream CRM outcomes are connected to the platform at all. If any of these are broken, fix them first. No AI tool compensates for Signal Debt.
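The attribution-window check is a small calculation once you have click-to-close lags from the CRM. A minimal Python sketch with hypothetical lag data (ten deals averaging 45 days, to mirror the example above). Google Ads click-through conversion windows can be set as long as 90 days, so a window that misses most of your lag distribution is a setting you can change.

```python
from statistics import mean
from typing import List


def share_within_window(lag_days: List[int], window_days: int) -> float:
    """Fraction of conversions whose click-to-conversion lag fits inside the window."""
    if not lag_days:
        return 0.0
    return sum(1 for d in lag_days if d <= window_days) / len(lag_days)


# Hypothetical click-to-close lags (days) for ten closed deals; replace with the
# gap between each deal's original ad click and its CRM close date.
lags = [18, 26, 33, 38, 42, 45, 48, 51, 57, 92]

print(f"Average lag: {mean(lags):.0f} days")
for window in (30, 60, 90):
    print(f"{window}-day window captures {share_within_window(lags, window):.0%} of deals")
```

With this sample, a 30-day window sees only the two fastest deals, so the keywords behind the slower, larger share of revenue look like they never convert.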

The Five Categories of AI PPC Tools (And Which One You Actually Need)

Every existing article in this space presents AI PPC tools as a single undifferentiated category. Optmyzr, Adalysis, Madgicx, Revealbot, and Smart Bidding are listed side by side as if they were alternatives to each other. They are not. They solve completely different problems. Evaluating them against each other is like comparing an auditor to a media buyer.

When you do not know which category of tool you need, two things happen. You evaluate tools on the wrong criteria. And you layer tools on top of functions the native platform is already handling, which creates redundancy, adds cost, and makes performance attribution harder.

My practice before any tool evaluation is to ask one question first: what is the single thing limiting this account’s performance right now? Map the answer to one of five functional categories, then evaluate tools within that category only.

I built this habit across years of reviewing tooling decisions at different account scales, from retail brands managing regional campaigns to BFSI clients running Google Ads for financial products to a crypto tax SaaS with 1.5 million users. In every case, the shortlist that held up was never longer than three tools at once. The tools that stayed were filling genuine gaps the native platform did not address. The tools that left were layering on top of what Smart Bidding or Meta’s own automation was already handling adequately.

Here are the five categories:

Category 1: Bid Management covers tools that interact directly with the auction, adjusting bids in real time or setting strategy parameters beyond what platform-native presets allow. Google Smart Bidding is itself a bid management AI. Third-party bid management tools belong in this category only when you have a reason to go beyond native bidding: multi-platform accounts requiring unified bid logic, agencies needing granular per-account control, or campaign complexity that exceeds what Smart Bidding’s preset strategies can accommodate.

Category 2: Account Auditing covers tools that continuously scan campaign structure for hygiene issues, query drift, wasted spend, and missed optimization opportunities. The native platform will not proactively tell you that your search terms have drifted, that your negative keyword coverage has eroded, or that your RSA combinations have collapsed to a single dominant variant. A human analyst can find these issues, but only if they are actively looking. An auditing tool looks continuously.

Category 3: Attribution and Measurement covers tools that improve how you measure and interpret campaign performance. This includes Google Ads Offline Conversion Import (native, free, and the single most underused capability in B2B and long-cycle accounts), as well as Northbeam and Triple Whale for DTC brands managing multi-channel attribution as browsers restrict third-party cookies. This category is not optional. It is the prerequisite for every other category to function correctly. Signal Debt lives here.

Category 4: Workflow Automation covers tools that automate repeatable campaign management tasks: budget pacing alerts, label management, cross-account reporting, bid cap enforcement, and performance anomaly detection. This is where agencies and multi-account practitioners find the most time leverage. Google Ads Scripts, Revealbot (for Meta campaigns), and Optmyzr’s rule engine all belong here.

Category 5: Creative Testing and Analysis covers tools that test and analyze ad creative performance, identifying which visual elements, copy styles, and CTAs drive results. This category addresses creative refresh velocity as a bottleneck. Madgicx and AdEspresso belong here. This category is right for you only if you have enough traffic volume for statistically meaningful creative comparisons.
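Whether you have “enough traffic volume” can be checked before committing to a creative testing tool. A minimal sketch of a standard two-proportion z-test on click-through rate, one common way to judge whether two creatives really differ. Using CTR as the metric and a 5% significance level are assumptions for illustration; this is not how Madgicx or AdEspresso compute their results, and the counts are hypothetical.

```python
from math import erf, sqrt


def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-sided z-test for a CTR difference between creatives A and B.

    Returns (z, p_value), using the pooled CTR under the null hypothesis
    that both creatives share the same true click-through rate.
    """
    ctr_a = clicks_a / impressions_a
    ctr_b = clicks_b / impressions_b
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        return 0.0, 1.0  # no clicks (or all clicks) on both sides: nothing to compare
    z = (ctr_a - ctr_b) / se
    # Standard normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2)))
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return z, p_value


# Same hypothetical CTRs (1.2% vs 1.0%) at two traffic levels.
for label, counts in {
    "2,000 impressions each": (24, 2000, 20, 2000),
    "50,000 impressions each": (600, 50000, 500, 50000),
}.items():
    z, p = two_proportion_z_test(*counts)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{label}: z = {z:.2f}, p = {p:.3f} ({verdict} at the 5% level)")
```

The same CTR gap is noise at low volume and a real signal at high volume, which is why creative analytics tools add little until traffic supports the comparison.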

Platform-Native AI Versus Third-Party Tools: The Decision That Most Articles Skip

Most AI PPC tool articles treat platform-native AI and third-party tools as equivalent alternatives. One section covers Smart Bidding and Performance Max. Another covers Optmyzr and Adalysis. The relationship between them is never mapped. The reader is left to figure out whether to use both, one, or neither.

Here is the relationship most articles never state clearly.

Platform-native AI and third-party tools are not competitors. They are hierarchical. Platform-native AI handles what happens at the auction: bid decisions, asset serving, audience signal processing. Third-party tools handle what happens before the auction (account structure, negative keywords, creative quality, campaign hygiene) and after it (reporting, attribution, budget pacing across channels). The value of a third-party tool is in filling gaps the native platform does not address. When a third-party tool tries to replicate what the native platform already does, it adds cost and creates attribution confusion without adding capability.

Google Ads performance data illustrates the scale at which Smart Bidding operates: auction-level bid decisions across every Google property, drawing on device, location, time of day, browser, query semantics, and user behavior history simultaneously. That is genuine capability that no third-party bid management tool replicates from outside the platform. The question is not whether Smart Bidding is good. The question is whether your account’s gap is in the bidding layer, or somewhere else entirely.

For many accounts, the answer is: somewhere else entirely. And that is exactly what the third-party tool market exists to address.

Smart Bidding and Performance Max Are Not the Same Tool

This distinction matters for diagnosing account problems.

Smart Bidding is a set of automated bidding strategies. It adjusts bids at auction level in real time based on your conversion goal: Target CPA, Target ROAS, Maximize Conversions, or Maximize Conversion Value. It operates within campaign types that support automated bidding and can be configured per campaign.

Performance Max is a campaign type. It runs across Google’s inventory from a single campaign: Search, Display, YouTube, Gmail, Discover, and Maps. It uses machine learning to serve your assets wherever Google’s signals indicate the highest conversion probability. Its bidding runs on Smart Bidding (Maximize Conversions or Maximize Conversion Value, with an optional CPA or ROAS target), but that bidding is bundled into the campaign type rather than chosen independently of the campaign structure.

Confusing the two leads to misdiagnosis. If your account has a bid efficiency problem, Smart Bidding settings and conversion data quality are the first places to look. If your account has a cannibalization problem where one campaign type is consuming traffic that should belong to another, that is a structural problem unrelated to how Smart Bidding sets bids. The fixes are different, and no third-party tool solves either one if you have misidentified which problem you have.

When Performance Max Is the Problem, Not the Solution

PMax is frequently misapplied. Running it alongside exact-match Search campaigns without brand exclusions produces cannibalization that practitioners routinely blame on “the AI.” The AI is not the problem. The configuration is.

I saw this pattern across retail campaign work. PMax would run without brand term exclusions. Branded search traffic, which was already converting efficiently in the exact-match Search campaign, started being served through PMax instead. The Search campaign’s impression share dropped. The PMax campaign reported strong ROAS. Total account performance was flat or slightly worse because PMax was claiming credit for conversions it had not generated: users who were already navigating to the brand and would have converted regardless of which campaign served the impression.

The fix is structural. Brand exclusions in PMax prevent it from competing with branded Search campaigns for the same query. Search impression share reporting shows whether cannibalization is happening before it becomes a material budget problem. These are configuration decisions that no third-party tool will make for you because they require someone to understand the account’s campaign architecture first.
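A quick way to see cannibalization in your own numbers is to compare what PMax reports with how much the account total actually moved. A rough sketch, assuming conversions by campaign for two comparable periods before and after PMax launched; the campaign keys, counts, and the 50% flag threshold are hypothetical. A before/after comparison ignores seasonality, so treat the result as a prompt to check brand exclusions and Search impression share, not as a true incrementality measurement.

```python
from typing import Dict


def pmax_incrementality(before: Dict[str, float], after: Dict[str, float]) -> Dict[str, float]:
    """Compare PMax-reported conversions with the change in account-wide conversions.

    before/after: conversions by campaign for two comparable periods,
    keyed by hypothetical names such as "brand_search" and "pmax".
    """
    pmax_reported = after.get("pmax", 0.0) - before.get("pmax", 0.0)
    account_change = sum(after.values()) - sum(before.values())
    return {
        "pmax_reported": pmax_reported,
        "account_change": account_change,
        "brand_search_change": after.get("brand_search", 0.0) - before.get("brand_search", 0.0),
        # Share of PMax's reported conversions that show up as net new for the account.
        "incremental_share": account_change / pmax_reported if pmax_reported else 0.0,
    }


before = {"brand_search": 500, "generic_search": 300}
after = {"brand_search": 320, "generic_search": 295, "pmax": 195}

result = pmax_incrementality(before, after)
print(result)
if result["incremental_share"] < 0.5:  # hypothetical threshold
    print("Most PMax credit looks shifted from existing campaigns; check brand exclusions.")
```

Here PMax reports 195 conversions while the account gained only 10, and branded Search lost 180: the flat-total pattern described above.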

If you are evaluating AI tools because Performance Max is underperforming, run this diagnostic before opening a vendor demo. Do you have brand exclusions active in PMax? Are your asset groups using high-quality video and image assets, or placeholder graphics? Are your audience signals built from actual customer data or from interest categories? If the answer to any of those falls short, fix the configuration. The AI cannot compensate for what you have not given it to work with.

The Tool List, Organized by the Problem It Solves

AI advertising adoption data shows how quickly practitioners have moved toward AI-assisted optimization. The tooling market has expanded in proportion, so the category now contains dozens of options, most of which overlap substantially with what the native platforms already offer.

Practitioners increasingly turn to LLMs to shortlist tools, which adds another layer of risk. WordStream’s accuracy test found that roughly 20% of AI-generated answers about PPC strategy contain factual errors. The evaluation process can mislead you in the same direction as Signal Debt: you end up making fast decisions on bad inputs.

The tools below are organized by category. Each entry covers what the tool actually does, what it requires to deliver lift, who it is genuinely best for, and where it stops working. This list excludes tools that are adequately covered by native Google or Meta features for most account types. It also excludes AI creative generation tools as a primary category. AdCreative.ai, Canva AI, and similar platforms serve a different buying decision from campaign management tooling and belong in a separate evaluation.

A note from advisory work across paid and organic growth clients: the active shortlist that held up at any one time was never longer than three tools. Every addition beyond that created attribution confusion and evaluation overhead that cost more than the marginal lift. The tools that stayed were filling genuine gaps. The tools that were removed were layering on top of what Smart Bidding and Meta’s automation were already handling.

Category 1: Bid Management

Optmyzr is the most consistently cited third-party option among agencies and practitioners managing complex Google Ads accounts. It is not a replacement for Smart Bidding. It is a control layer on top of it: conditional bid rules, custom budget pacing logic, and optimization workflows that go beyond what Smart Bidding’s preset strategies allow. The PPC Investigator feature maps performance changes to their root causes, which is useful when multiple variables are shifting simultaneously and you cannot isolate which change drove the result.

The Optmyzr Mabo case study documents a UK PPC agency cutting account management hours by 56%, running A/B tests 36% faster, and speeding up bid management by 42% after adopting Optmyzr’s Rule Engine and Shopping Campaign tools across their client portfolio.

Pricing starts at $208/month for smaller accounts. The tool rewards configuration investment. It does not deliver lift out of the box. If you are running two campaigns with clean Smart Bidding and sufficient conversion volume, Optmyzr adds cost and complexity without proportionate benefit. Best for agencies managing multiple accounts and advertisers whose campaign complexity genuinely exceeds native tools.

Google’s Smart Bidding documentation recommends at least 30 conversions per month for Target CPA evaluation, and 50 for Target ROAS, before results can be assessed accurately. If your account is below that threshold, no third-party bid management tool resolves the underlying data volume problem.
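The volume check is worth running per campaign before any tool evaluation. A minimal sketch that applies the 30 and 50 monthly-conversion figures cited above; the campaign names and counts are hypothetical, and the thresholds should be confirmed against Google’s current guidance, which changes over time.

```python
from typing import Dict, Tuple

# Minimum conversions in the last 30 days, per the thresholds cited above.
# Confirm current figures in Google Ads Help before relying on them.
MIN_CONVERSIONS_30D = {"target_cpa": 30, "target_roas": 50}


def ready_for_target_bidding(strategy: str, conversions_30d: int) -> Tuple[bool, int]:
    """Return (ready, shortfall) for a Target CPA or Target ROAS campaign."""
    required = MIN_CONVERSIONS_30D[strategy]
    shortfall = max(0, required - conversions_30d)
    return shortfall == 0, shortfall


# Hypothetical campaigns: (strategy, conversions in the last 30 days)
campaigns: Dict[str, Tuple[str, int]] = {
    "Brand | tCPA": ("target_cpa", 85),
    "Generic | tROAS": ("target_roas", 34),
    "Competitor | tCPA": ("target_cpa", 12),
}

for name, (strategy, conversions) in campaigns.items():
    ready, shortfall = ready_for_target_bidding(strategy, conversions)
    status = "enough volume" if ready else f"{shortfall} conversions short"
    print(f"{name}: {status}")
```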

Skai is an enterprise-level platform covering Google, Meta, Amazon, and retail media in a unified interface. The cross-channel AI optimization and consolidated reporting are the primary value. Pricing is custom and suited to accounts spending hundreds of thousands per month. For single-platform accounts or accounts below $50K in monthly spend, the cost structure does not make sense and the cross-channel value does not materialize.

Category 2: Account Auditing

Adalysis takes a different approach from most AI PPC tools. Its focus is quality assurance and continuous auditing rather than bid automation. It was founded by Brad Geddes, and his emphasis on account hygiene, search term control, and systematic testing is embedded in the platform’s design. It detects query drift, negative keyword gaps, RSA combination fatigue, and structural issues that manual review catches inconsistently. Pricing starts at $99/month.

Best for practitioners managing accounts with 20 or more active ad groups, where systematic quality control saves more time than the tool costs. For small accounts with limited keyword volume, the data density is not sufficient for continuous testing to produce statistically meaningful guidance. Adalysis works best when the account gives it enough signal to work with.

Category 3: Attribution and Measurement

Google Ads Offline Conversion Import is not a third-party tool. It is a native Google Ads capability that most B2B, BFSI, and long-cycle SaaS accounts are not using. It imports downstream CRM outcomes back into the platform by matching them to the original Google click ID. When Smart Bidding can see which clicks produced qualified leads, closed deals, or signed accounts rather than just form fills, it reorients toward the terms and audiences that actually drive business results.

As noted above, Google’s own data documents a median 10% increase in conversions for advertisers who connect first-party data with click IDs for offline measurement. It is free. The setup requires your CRM to log the Google click ID at the moment of lead creation, which most CRM setups do not do by default but most CRM administrators can configure in a day with the right documentation. This is the highest-leverage action available to most accounts running AI bidding on long-cycle sales processes.
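Capturing the click ID is usually the only missing piece. With auto-tagging enabled, Google Ads appends a `gclid` parameter to the landing page URL, and the lead-creation step just needs to keep it. A minimal server-side sketch, assuming the landing URL is still available when the lead is created (many setups first store it in a hidden form field or first-party cookie). The CRM field name and sample values are hypothetical.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse


def extract_gclid(landing_url: str) -> Optional[str]:
    """Return the gclid query parameter that auto-tagging appends, if present."""
    values = parse_qs(urlparse(landing_url).query).get("gclid")
    return values[0] if values else None


def create_lead(form_data: dict, landing_url: str) -> dict:
    """Copy form fields into a lead record and attach the click ID.

    "gclid" as the CRM field name is hypothetical; use whatever custom
    field your CRM administrator creates for it.
    """
    lead = dict(form_data)
    lead["gclid"] = extract_gclid(landing_url)
    return lead


lead = create_lead(
    {"name": "A. Sharma", "product": "credit card"},
    "https://example.com/apply?utm_source=google&gclid=EAIaIQobChMI_example",
)
print(lead)
```

Storing the value at creation time, rather than looking it up later, is what makes the offline import join possible weeks afterward when the deal closes.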

Northbeam and Triple Whale are post-purchase attribution platforms built primarily for DTC e-commerce brands managing multi-channel measurement as browser restrictions on third-party cookies erode cross-site tracking. If you are running Google, Meta, TikTok, and Pinterest simultaneously and need a single attribution source that does not depend on platform-reported ROAS, these belong in your evaluation. For B2B or single-channel accounts, they solve a problem smaller than their cost and complexity.

Category 4: Workflow Automation

Google Ads Scripts are custom JavaScript automations that run within Google Ads at no additional cost. Budget pacing alerts, bid cap enforcement, performance anomaly detection, label management, negative keyword monitoring, and cross-account reporting are all achievable this way. The ceiling is high and the cost is zero. Scripts are best for technically capable practitioners or agencies with access to development resources who want automation without a third-party subscription. Poorly written scripts create real account risk, so start with published scripts from established practitioners (Optmyzr and Adalysis both maintain public script libraries) and review every line before running it in production.
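Budget pacing is a good example of the logic these scripts encode. Actual Google Ads Scripts are written in JavaScript against Google’s own scripting API; the sketch below shows only the pacing arithmetic, in Python, under a straight-line assumption (equal spend every day of the month) and a hypothetical 10% tolerance.

```python
import calendar
from datetime import date


def pacing_status(spend_to_date: float, monthly_budget: float,
                  today: date, tolerance: float = 0.10) -> str:
    """Compare month-to-date spend with a straight-line budget schedule.

    Assumes spend_to_date covers the month through the end of `today`.
    """
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    expected = monthly_budget * today.day / days_in_month
    if expected == 0:
        return "on pace"
    ratio = spend_to_date / expected
    if ratio > 1 + tolerance:
        return "overpacing"
    if ratio < 1 - tolerance:
        return "underpacing"
    return "on pace"


# Hypothetical: a 30,000 monthly budget checked on 15 June (expected spend 15,000).
check_day = date(2024, 6, 15)
for spend in (18000, 15500, 12000):
    print(spend, pacing_status(spend, 30000, check_day))
```

A script version would run this comparison on a schedule and send an alert instead of printing.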

Revealbot is built specifically for Meta advertising automation. It runs rule-based automations triggered by real-time performance signals: pausing underperforming creative, shifting budget toward winning ad sets, and alerting on spend pacing issues before they become problems. For DTC brands managing Meta campaigns at volume, where creative rotation decisions need to happen faster than weekly human review allows, it earns its place. For Google Ads practitioners or accounts with modest Meta budgets, it solves a problem smaller than its cost.
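Rule-based automation of this kind reduces to a few guarded conditions evaluated on each data refresh. A minimal sketch of one such rule in Python; it is not Revealbot’s rule syntax, and the target CPA, minimum-spend multiple, and 1.5x tolerance are hypothetical settings.

```python
from dataclasses import dataclass


@dataclass
class AdSetSnapshot:
    name: str
    spend: float
    conversions: int


def decide(snapshot: AdSetSnapshot, target_cpa: float,
           min_spend_multiple: float = 2.0, cpa_tolerance: float = 1.5) -> str:
    """Return 'wait', 'pause', or 'keep' for one ad set under a simple CPA rule."""
    if snapshot.spend < target_cpa * min_spend_multiple:
        return "wait"  # not enough spend yet to judge fairly
    if snapshot.conversions == 0:
        return "pause"  # spent past the guard with nothing to show
    cpa = snapshot.spend / snapshot.conversions
    return "pause" if cpa > target_cpa * cpa_tolerance else "keep"


# Hypothetical ad sets evaluated against a target CPA of 40.
for s in [AdSetSnapshot("A", 60, 0), AdSetSnapshot("B", 200, 2),
          AdSetSnapshot("C", 300, 8), AdSetSnapshot("D", 120, 0)]:
    print(s.name, decide(s, target_cpa=40))
```

The minimum-spend guard is the important design choice: without it, a rule like this pauses ad sets before they have spent enough to be judged.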

Category 5: Creative Testing and Analysis

Madgicx is a Meta Ads management platform with creative analytics that break down performance by component: thumbnails, headlines, CTAs, and visual elements separately. The value is not generating creative. It is understanding which variables within your existing creative are driving performance, which focuses subsequent creative investment on the specific levers that move results. Best for DTC brands where creative testing is the primary growth lever and monthly Meta spend is above $10K. If you are not generating enough creative variants to have meaningful comparison data, Madgicx has no signal to analyze.

AdEspresso simplifies A/B testing for Facebook and Instagram and is better suited to smaller advertisers who want structured creative testing without the complexity of native Meta campaign management or Madgicx’s analytics depth. At higher spend levels and with more creative volume, native Meta Advantage+ creative testing or Madgicx scales better.

Before you shortlist any tool, run five checks on your own account. They take less time than a vendor demo and will tell you more.

- Pull your last 90 days of conversion data and confirm that what you are tracking predicts revenue. If you are tracking form fills but your sales team qualifies fewer than 20% of them, your AI tools are optimizing for the wrong event. Fix the conversion definition before evaluating anything else.

- Check whether your attribution window matches your actual sales cycle. If your average deal takes 45 days and your attribution window is 30, you are systematically underreporting conversions from your highest-intent terms and Smart Bidding is pulling budget away from them.

- Map your account’s single biggest performance bottleneck to one of the five categories above. Bid inefficiency, account hygiene, creative fatigue, measurement gaps, and workflow capacity are five different problems. Name yours specifically before opening a vendor comparison page.

- If you manage Google Ads with a sales cycle longer than 30 days and have not connected CRM outcomes via offline conversion import, do that first. It is free, it is native, and it is the single highest-leverage action available to most B2B and long-cycle accounts running AI bidding on incomplete data.

- Set a 60-day measurement window for any new AI tool before drawing conclusions. Most AI optimization tools need four to six weeks of data to adjust their models to your account. Evaluating performance in week two is evaluating the onboarding period, not the tool.
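The 60-day rule is easier to hold to when the evaluation itself excludes onboarding data. A minimal sketch, assuming you have spend and conversion totals per day and treating the first six weeks (the upper end of the four-to-six-week range above) as the adjustment period; the dates, figures, and baseline CPA are hypothetical.

```python
from datetime import date, timedelta
from typing import Dict, Optional, Tuple


def evaluate_tool(
    daily: Dict[date, Tuple[float, int]],  # date -> (spend, conversions)
    launch: date,
    today: date,
    learning_days: int = 42,  # upper end of the four-to-six-week adjustment period
    window_days: int = 60,
) -> Tuple[str, Optional[float]]:
    """Return (status, post-learning CPA) for a tool launched on `launch`."""
    if (today - launch).days < window_days:
        return "too early", None
    start = launch + timedelta(days=learning_days)
    in_window = [v for d, v in daily.items() if start <= d <= today]
    spend = sum(s for s, _ in in_window)
    conversions = sum(c for _, c in in_window)
    if conversions == 0:
        return "no conversions after learning period", None
    return "evaluate", spend / conversions


launch = date(2024, 1, 1)
# Hypothetical daily data: CPA of 50 during onboarding, about 33 afterwards.
daily = {launch + timedelta(days=i): (100.0, 2 if i < 42 else 3) for i in range(62)}
baseline_cpa = 45.0  # hypothetical CPA before the tool was added

print(evaluate_tool(daily, launch, launch + timedelta(days=14)))
status, cpa = evaluate_tool(daily, launch, launch + timedelta(days=61))
print(status, f"{cpa:.2f}", f"{(cpa - baseline_cpa) / baseline_cpa:+.0%} vs baseline")
```

Averaging all 61 days would blend the onboarding CPA into the result and understate what the tool does once it has adjusted.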

If you want help auditing your account’s measurement layer before choosing a tool, reach out via shno.co.