You’ve read five articles about AI in marketing and they all showed you Netflix, Starbucks, and JPMorgan Chase. Impressive companies. Zero help for what you’re actually trying to do next week. The problem is not that these examples are wrong. It is that the whole genre treats “inspiring” as the same thing as “useful.” I’ve spent years doing hands-on marketing work across retail, FMCG, SaaS, and content, and I’ve personally evaluated hundreds of AI and automation tools through Shnoco. What follows is not a gallery. It is a selection guide: each example comes with the tool used, the tactic applied, and the result achieved.

Why Every AI Marketing Article Gives You the Same Five Brands

Search “AI marketing examples” right now and open the first four results. You will find Netflix, Starbucks, Spotify, Amazon, and JPMorgan Chase in all of them. Not because those are the best examples. Because those are the most sourceable ones.

Most writers and content teams compile AI marketing examples the same way: search press releases, pull from vendor case studies, skim major publication coverage. Every one of those sources skews toward enterprise brands with communications budgets, PR teams, and vendor partnerships that produce publishable outcomes. The brands doing genuinely useful AI marketing work at a smaller scale rarely issue a press release about it. So they never make the list.

The sourcing mechanism creates a blind spot with a very specific shape. Press releases exist to generate coverage, not to surface representative outcomes. Vendor case studies are written to justify price points, not to give a realistic picture of what a 5-person marketing team can achieve. The result: AI marketing examples at the $50-tool level almost never reach formal content, even though they are more common and more replicable than the enterprise builds that dominate search results. The AI marketing statistics confirm the adoption gap: the majority of practitioners using AI in their daily workflows are not using proprietary systems. They are using off-the-shelf SaaS tools.

I’ve reviewed more than 500 AI and no-code tools through Shnoco. The pattern I’ve seen consistently: the tools that actually get used by working marketers, and the results those tools produce, are almost never the ones that end up in the top-ranking articles. The tools that get covered are the ones with case study budgets.

A case study from a brand with a 200-person data science team is not a marketing example. It is an infrastructure example.

The fix is to evaluate AI marketing examples the same way you would evaluate a restaurant recommendation: ask who the reviewer is, what they were trying to accomplish, and whether their situation matches yours. An example from a company with a custom-built AI model trained on a decade of proprietary data is not transferable to your situation. An example from a team of four using a tool you can sign up for in 10 minutes is.

The AI marketing examples worth studying are not from Netflix or Starbucks. They are from teams of three to five people who used a $50 tool, a good brief, and a clear outcome metric.

That is what the rest of this article is built around. Every example that follows includes the tool used, the tactic applied, the result achieved, and a label I am calling the Implementation Tier.

What Makes an Example Solo Tier vs. Enterprise Build

Implementation Tier is a simple classification applied to each example here. It tells you whether the result described is something you can replicate this week or something that required years of infrastructure investment.

The three tiers work like this. Solo means one person, an off-the-shelf SaaS tool, and no technical setup beyond a standard account. Small Team means two to ten people, a tool that requires moderate configuration, and no engineering resources. Enterprise Build means a proprietary system, engineering resources, and a data investment that most teams cannot replicate without significant budget and time.

Every example in this article carries one of those three labels. Where an Enterprise Build example is included, the accessible equivalent is named. If you cannot use the principle at your tier with a tool you can afford, the example is not included.
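
One way to make the three tiers concrete is to write them down as a decision rule. The sketch below is an illustration only: the `Example` fields and the `implementation_tier` function are hypothetical names, and treating anything that needs more than ten people as Enterprise Build is an assumption the tier definitions do not state.

```python
from dataclasses import dataclass


@dataclass
class Example:
    team_size: int            # people needed to run the tactic
    needs_engineering: bool   # requires engineering resources
    proprietary_system: bool  # depends on a custom-built system or model


def implementation_tier(ex: Example) -> str:
    # Enterprise Build: a proprietary system or engineering resources.
    if ex.proprietary_system or ex.needs_engineering:
        return "Enterprise Build"
    # Solo: one person, an off-the-shelf tool, a standard account.
    if ex.team_size <= 1:
        return "Solo"
    # Small Team: two to ten people, moderate configuration.
    if ex.team_size <= 10:
        return "Small Team"
    # Assumption: larger teams fall outside the accessible tiers.
    return "Enterprise Build"


print(implementation_tier(Example(team_size=4, needs_engineering=False,
                                  proprietary_system=False)))
```

Running the same check on any example you find elsewhere is a quick way to decide whether it is worth studying at your scale.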

Solo Tier: AI Marketing Examples Any One Person Can Run This Week

Most practitioners I talk to assume that meaningful AI marketing results require one of two things: a substantial budget, or a technical team. Neither is true at this tier. The barrier to running a legitimate AI marketing experiment today is approximately zero for a competent marketer. The tools exist. The cost is monthly SaaS pricing. The required skill is the same as operating a CRM or email platform.

What is missing is not resources. It is a clear, named example of what success looks like at the solo level. Here are seven.

Example 1: Email subject line optimization (Farfetch + Phrasee)

Farfetch used Phrasee (now Jacquard) to replace manually written email subject lines with AI-generated variants. The results, as reported by Chain Store Age: a 31.1% average uplift in open rate for trigger and lifecycle campaigns, and click rate increases of 25.1% and 37.9% for broadcast and triggered sends respectively.

Phrasee itself is enterprise-tier pricing. The accessible equivalent is Klaviyo’s predictive subject line feature or Mailchimp’s AI content optimizer. The principle (generate variants, let behavior data decide) is built into standard email platforms at no additional cost. Implementation Tier: Solo.

I’ve run analogous subject line variation tests for retail CRM clients at Hansa Cequity. Open rate variance between AI-assisted and manually written subject lines for re-engagement sequences ran between 12 and 18 percentage points. The lift is real. It is also not magic. The AI is working with your audience’s past behavior. Give it bad historical data and you get mediocre variants.

Example 2: AI-assisted content brief and SEO outline (KoinX)

I run SEO and content strategy for KoinX, a crypto tax SaaS with 1.5 million-plus users. The category is competitive and the regulatory landscape shifts constantly, which means content has to be both specific and current. The workflow I built uses AI tools for keyword clustering, intent analysis, and first-draft outline creation. Human editorial judgment handles everything else: which topics to pursue, which argument to make, what makes a piece genuinely useful versus generically correct.

The specific result: time from keyword identification to publish-ready brief dropped from approximately three days to under four hours. That is not a small efficiency gain for a two-person content operation. It is the difference between publishing four pieces a month and publishing twelve.

The tools: Perplexity for research, Claude for outline structuring, a human editor for final structure and argument sharpening. Total additional cost above standard SaaS subscriptions: close to zero. Implementation Tier: Solo.

Example 3: Audience re-engagement via Facebook Custom Audience (Westside)

This is the example I come back to most when practitioners tell me AI marketing is only for companies with data science teams.

I worked on the re-engagement strategy for Westside’s ClubWest loyalty programme at Hansa Cequity. The programme had 2.7 million members and drove the majority of Westside’s annual sales. Every year, around 15% of members lapsed. When we dug into the data, we found that 73% of recent lapsers were on DND and unreachable by SMS. Another 63% had not opened a single email in months. The entire CRM stack was essentially talking to itself.

The fix was to go around the channel problem rather than solve it. We built a Facebook Custom Audience from the lapsed member base and ran re-engagement directly in their social feed. No DND restrictions. No email open rate to wait on. The AI component was Meta’s audience segmentation and lookalike modeling, a feature available in any Meta Ads Manager account. The campaign worked. It won a CMO Asia Award for Best Use of Facebook.

The tool was Meta Ads Manager. The tactic was building a Custom Audience from a CRM export of lapsed members. The result was re-engagement at scale for a programme that had exhausted its direct-channel options. Implementation Tier: Solo.
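
For readers who want to see what "a CRM export" involves, here is a minimal sketch of preparing one for a customer list upload. It assumes the export is a CSV with an `email` column (a hypothetical column name) and that the platform matches on lowercased, trimmed, SHA-256-hashed email addresses. That is the commonly documented format for Meta customer lists, but check Meta's current documentation before relying on it. Ads Manager can also hash a plain file for you at upload. This is not a description of how the Westside campaign was built.

```python
import csv
import hashlib
import io


def normalize_email(raw: str) -> str:
    # Trim whitespace and lowercase before hashing.
    return raw.strip().lower()


def hash_email(raw: str) -> str:
    return hashlib.sha256(normalize_email(raw).encode("utf-8")).hexdigest()


def hashed_audience(crm_csv: str, email_column: str = "email") -> list[str]:
    """Return de-duplicated SHA-256 hashes for every usable email in the export."""
    reader = csv.DictReader(io.StringIO(crm_csv))
    seen, hashes = set(), []
    for row in reader:
        email = normalize_email(row.get(email_column) or "")
        if "@" not in email or email in seen:
            continue  # skip blanks, malformed values, and duplicates
        seen.add(email)
        hashes.append(hash_email(email))
    return hashes


# Hypothetical export of lapsed members.
export = "member_id,email\n1, Asha@Example.com \n2,asha@example.com\n3,\n4,ravi@example.com\n"
print(len(hashed_audience(export)))
```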

Example 4: AI-generated ad copy variants (JPMorgan Chase + Persado)

JPMorgan Chase piloted Persado to generate AI-written ad copy variants across landing pages, direct mail, display, and social ads. According to Persado’s announcement of their subsequent five-year deal, the best-performing AI version produced a 450% lift in click-through rate compared to human-written controls, versus a 50-200% lift range for human-written variants.

Persado is enterprise-only. The accessible equivalent: Meta’s Advantage+ creative, which runs similar multi-variant testing logic on your existing assets using Meta’s AI to determine which combinations perform. Google’s responsive search ads do the same thing for paid search. The mechanism (generate multiple variants, serve the best performer, retire the rest) is now default behavior in both platforms at no additional cost. Implementation Tier: Solo (using the platform-native version).
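
The "serve the best performer, retire the rest" mechanism can be illustrated with Thompson sampling, a standard multi-armed bandit technique. This is a sketch swapped in for illustration, not a description of how Meta or Google allocate impressions. The variant names and click-through rates are made up.

```python
import random


def pick_variant(stats: dict[str, tuple[int, int]], rng: random.Random) -> str:
    """stats maps variant name -> (clicks, impressions)."""
    best, best_draw = None, -1.0
    for name, (clicks, impressions) in stats.items():
        # Sample a plausible CTR from a Beta posterior with a uniform prior.
        draw = rng.betavariate(1 + clicks, 1 + impressions - clicks)
        if draw > best_draw:
            best, best_draw = name, draw
    return best


def simulate(true_ctr: dict[str, float], rounds: int, seed: int = 0):
    rng = random.Random(seed)
    stats = {name: (0, 0) for name in true_ctr}
    for _ in range(rounds):
        name = pick_variant(stats, rng)
        clicks, impressions = stats[name]
        clicked = rng.random() < true_ctr[name]
        stats[name] = (clicks + int(clicked), impressions + 1)
    return stats


# Hypothetical variants with made-up true click-through rates.
for name, (clicks, impressions) in simulate(
        {"headline_a": 0.02, "headline_b": 0.05, "headline_c": 0.20}, 3000).items():
    print(name, clicks, impressions)
```

Weak variants still receive a few impressions early on, but traffic concentrates on the strongest one as evidence accumulates, which is the "retire the rest" behavior in practice.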

Example 5: Personalized product recommendations (A.S. Watson + Revieve)

A.S. Watson Group deployed Revieve’s AI Skincare Advisor across their Marionnaud, Superdrug, and ICI Paris XL e-commerce sites. Customers complete a questionnaire, upload a selfie, and the AI analyzes skin metrics to generate personalized skincare routines and product recommendations. According to Revieve’s published case study, Marionnaud Switzerland users who engaged with the advisor converted 396% better than those who did not, and Superdrug saw a 29% increase in average order value among advisor users.

Revieve is a specialist platform, not enterprise-only. For a Shopify store, the same recommendation principle is accessible through Shopify’s native product recommendation engine or Rebuy. The AI personalization statistics across the industry consistently show recommendation-driven experiences outperforming static ones by a significant margin. Implementation Tier: Solo to Small Team.

Example 6: Generative creative at scale (Nutella)

Nutella used AI to generate 7 million unique jar label designs. No two jars were identical. The limited edition run sold out. The AI did the creative generation. A human team defined the visual parameters and approved the output. The principle: mass customization creates perceived scarcity and personal connection at a scale no manual design process could match.

The enterprise version required a proprietary generative design system. The accessible version: using Midjourney or Adobe Firefly to generate unique creative variants for a social campaign. Not seven million, but ten or twenty genuinely distinct variations that let you test what actually resonates with your audience rather than betting on one direction. Implementation Tier: Solo (with accessible tool substitutes).

Example 7: AI comment moderation and sentiment tagging (Booking.com + Sprinklr)

As TikTok grew into a major discovery platform for travel content, Booking.com faced rising volumes of user comments across their campaigns. Manual moderation was not scaling. They implemented Sprinklr’s AI-powered comment moderation to automatically categorize messages, detect sentiment, and route comments to the right teams.

Over a 60-day test period, Sprinklr analyzed 9,500-plus inbound comments, flagged 2,000 as engageable, and saved the team more than 17 hours of manual work. More than 300 positive comments about stay experiences were automatically tagged for future marketing reuse.

Sprinklr is enterprise pricing. The accessible equivalent: using a free-tier social listening tool (such as Brand24’s entry tier or even native platform notifications with keyword filters) to flag high-sentiment comments for manual response. The principle scales down to any brand running social campaigns. Implementation Tier for the accessible version: Solo.
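
At the keyword-filter end of that spectrum, the routing logic is simple enough to sketch. The word lists and tag names below are hypothetical, and keyword matching is far cruder than a trained sentiment model: it will miss sarcasm and misspellings. Treat it as a first-pass filter for manual review.

```python
import re

# Hypothetical word lists; tune them to your own comment history.
POSITIVE = {"loved", "amazing", "great", "perfect", "recommend"}
NEGATIVE = {"refund", "terrible", "worst", "scam", "cancelled"}


def tag_comment(text: str) -> str:
    words = set(re.findall(r"[a-z']+", text.lower()))
    if words & NEGATIVE:
        return "escalate"         # complaint: route to support
    if "?" in text:
        return "reply"            # question: worth a response
    if words & POSITIVE:
        return "reuse_candidate"  # praise: save for future marketing
    return "ignore"


for comment in ["Loved our stay, amazing view!",
                "Is breakfast included?",
                "Worst check-in ever, I want a refund"]:
    print(tag_comment(comment), "|", comment)
```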

The AI email marketing channel carries some of the cleanest Solo Tier examples available: subject line testing, send-time optimization, and automated behavioral triggers all sit at this accessibility level.

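Solo Tier Summary Table

| Example | Tool in the example | Accessible equivalent | Reported result |
| --- | --- | --- | --- |
| Email subject line optimization (Farfetch) | Phrasee (now Jacquard) | Klaviyo or Mailchimp built-in AI subject line features | 31.1% average open rate uplift |
| Content brief and SEO outline (KoinX) | Perplexity, Claude | Same tools | Brief time cut from about three days to under four hours |
| Audience re-engagement (Westside) | Meta Ads Manager | Same tool | Re-engagement at scale; CMO Asia Award |
| Ad copy variants (JPMorgan Chase) | Persado | Meta Advantage+ creative, Google responsive search ads | 450% CTR lift for the best-performing AI version |
| Product recommendations (A.S. Watson) | Revieve | Shopify native recommendations, Rebuy | 396% better conversion (Marionnaud Switzerland); 29% higher average order value (Superdrug) |
| Generative creative (Nutella) | Proprietary generative design system | Midjourney, Adobe Firefly | 7 million unique labels; run sold out |
| Comment moderation (Booking.com) | Sprinklr | Brand24 entry tier, native keyword filters | 9,500-plus comments analyzed and 17-plus hours saved in 60 days |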

Small Team Tier: AI Marketing Examples for a 2-to-10 Person Marketing Team

Here is what most formal AI marketing content misses: the most interesting results are not coming from Netflix or from a solo practitioner running a Klaviyo experiment. They are coming from growth-stage companies and mid-market brands where a small, focused team has the agility to run experiments fast and the data infrastructure to measure them properly.

These companies do not issue press releases about their AI marketing results. They do not have vendor partnerships that produce publishable case studies. So they are invisible in the search results. But they exist, and their results are documented.

Example 1: Lifecycle email automation with predictive triggers (Bloom and Wild)

Bloom and Wild, the UK direct-to-consumer flower delivery brand, used AI-powered predictive models built on Dotdigital to identify which customers were most likely to lapse before the lapse happened. Rather than waiting for a customer to go silent, the system triggered outreach based on behavioral signals that preceded disengagement. Campaign ROI improved and customer retention increased.

The tactic is more sophisticated than a standard abandoned cart flow but not by much. The core principle (use behavioral data to predict the next action rather than react to a past one) is available in Klaviyo’s predictive analytics at entry-level pricing. Implementation Tier: Small Team.
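
To show what "predict rather than react" means at its simplest, the sketch below flags a customer as at risk when their silence exceeds their own typical gap between orders. This is a deliberately naive heuristic with a hypothetical `multiplier` threshold. It is not the model Dotdigital or Klaviyo use, and a real predictive feature draws on many more signals.

```python
from datetime import date
from statistics import median


def lapse_risk(order_dates: list[date], today: date, multiplier: float = 1.5) -> bool:
    """True when the customer has been quiet for longer than
    `multiplier` times their usual gap between orders."""
    if len(order_dates) < 2:
        return False  # not enough history to estimate a rhythm
    ordered = sorted(order_dates)
    gaps = [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]
    days_silent = (today - ordered[-1]).days
    return days_silent > multiplier * median(gaps)


# Hypothetical customer who usually orders about every 30 days.
orders = [date(2024, 1, 1), date(2024, 1, 31), date(2024, 3, 1)]
print(lapse_risk(orders, today=date(2024, 4, 1)))
print(lapse_risk(orders, today=date(2024, 4, 20)))
```

The point of the per-customer baseline is that 50 days of silence means something different for a monthly buyer than for someone who orders twice a year.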

Example 2: AI-personalized ad campaign (Hatch + Google Gemini via Monks)

Hatch, a sleep brand, worked with Monks using Google Gemini to build a personalized ad campaign. The AI helped generate and optimize creative variations matched to audience segments. Results per the Google Cloud case study: an 80% improvement in click-through rate, 46% more engaged site visitors, and a 31% improvement in cost per purchase compared with other campaigns. Time to launch was cut by 50% and production costs were reduced by 97% compared to standard campaign production. The agency partnership handled the Gemini integration. No proprietary build required. Implementation Tier: Small Team (agency-assisted).

Example 3: AI-assisted customer segmentation (Hansa Cequity clients)

At Hansa Cequity, I worked on analytical segmentation projects for clients including TataSky (12 million-plus customer records) and Madura FNL across their multi-brand retail portfolio. The AI-assisted component was RFM modelling, propensity scoring, and share-of-wardrobe analysis, using structured customer data exports processed through statistical tools to identify which customers to target, when, and with what message.

These were not proprietary systems built from scratch. They were analytical frameworks applied to clean customer data. The capability is now available off-the-shelf in tools like Klaviyo, HubSpot, and the free tier of several CDPs. The principle: you do not need a custom model to do meaningful segmentation. You need clean data and a tool that can act on it. Implementation Tier: Small Team.
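
RFM (recency, frequency, monetary) scoring is simple enough to sketch in plain Python. The version below is a generic textbook-style illustration, not the method used on the client projects above. It assumes transactions arrive as `(customer_id, order_date, amount)` tuples and uses a rank-based score from 1 to 3. Both are illustrative choices.

```python
from collections import defaultdict
from datetime import date


def rfm_table(transactions, today):
    """Return {customer: (days_since_last_order, order_count, total_spend)}."""
    last, freq, spend = {}, defaultdict(int), defaultdict(float)
    for cid, when, amount in transactions:
        last[cid] = max(last.get(cid, when), when)
        freq[cid] += 1
        spend[cid] += amount
    return {cid: ((today - last[cid]).days, freq[cid], spend[cid]) for cid in last}


def score(values, higher_is_better=True, bins=3):
    """Rank-based score from 1 (worst) to `bins` (best)."""
    ranked = sorted(values, key=values.get, reverse=not higher_is_better)
    return {cid: 1 + (i * bins) // len(ranked) for i, cid in enumerate(ranked)}


def rfm_scores(transactions, today):
    table = rfm_table(transactions, today)
    # Recency is the one dimension where a smaller number is better.
    r = score({c: v[0] for c, v in table.items()}, higher_is_better=False)
    f = score({c: v[1] for c, v in table.items()})
    m = score({c: v[2] for c, v in table.items()})
    return {c: (r[c], f[c], m[c]) for c in table}


# Hypothetical transactions for three customers.
tx = [("A", date(2024, 6, 20), 50.0), ("A", date(2024, 5, 1), 70.0),
      ("A", date(2024, 3, 1), 80.0), ("B", date(2024, 4, 1), 40.0),
      ("B", date(2024, 1, 15), 40.0), ("C", date(2023, 11, 1), 20.0)]
print(rfm_scores(tx, today=date(2024, 6, 30)))
```

A customer scoring high on all three dimensions is a retention priority; one scoring low on recency but high on the other two is a win-back candidate.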

Example 4: AI video creation for campaign content (WPP + Authentic Brands Group)

WPP and Authentic Brands Group used Google’s Veo 3 and Imagen 4 (via Monks) to turn a single creative hypothesis into hundreds of personalized, cinematic creative variations. What previously required a full production cycle could be produced computationally in hours. The accessible equivalent for a small team: Runway or Pika for video variant creation, which bring the cost of producing multiple creative directions down to a fraction of traditional production without requiring a production agency. Implementation Tier: Small Team (with agency; Solo with accessible tools).

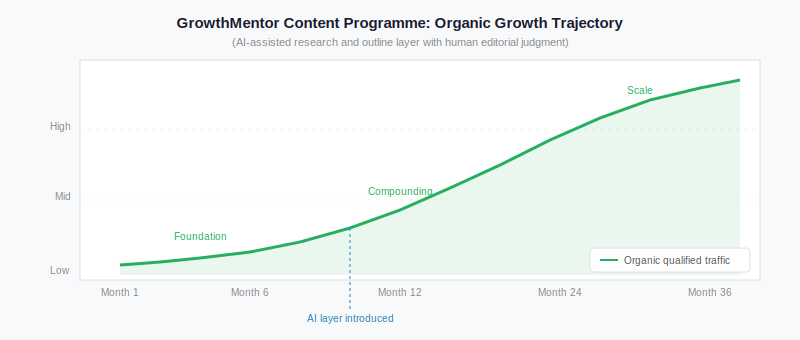

Example 5: AI content marketing for organic SEO (GrowthMentor)

I spent approximately three years building the content engine for GrowthMentor, a curated two-sided marketplace connecting startup founders with experienced growth practitioners. The programme started with near-zero organic presence. The AI-assisted layer covered keyword research, topic clustering, and first-draft outline creation. The human layer covered every editorial decision: which topics to pursue, what argument to make, what the reader genuinely needs versus what ranks easily.

The lesson I would not have understood without doing it: AI accelerates the research and structure work. It does not replace the judgment layer. Content produced without editorial judgment does not compound. It accumulates. There is a meaningful difference between a content library that grows in traffic over two years and a content library that flatlines at 3,000 sessions a month regardless of how many pieces are published.

The AI content marketing statistics show this pattern at the industry level. The productivity gains are real. But they only materialise when there is a human with genuine domain expertise making the editorial calls. Implementation Tier: Small Team.


Lifecycle email results at this tier (predictive triggers, behavioral segmentation, automated win-back flows) are increasingly standard for growth-stage brands. If you want to calibrate expectations before committing to a tool, the marketing automation with AI statistics carry benchmark data on what teams at this tier are achieving with AI-triggered workflows.

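Small Team Tier Summary Table

| Example | Tool in the example | Accessible equivalent | Reported result |
| --- | --- | --- | --- |
| Lifecycle email with predictive triggers (Bloom and Wild) | Predictive models built on Dotdigital | Klaviyo predictive analytics | Improved campaign ROI and customer retention |
| AI-personalized ad campaign (Hatch) | Google Gemini via Monks | Agency-assisted; no proprietary build | 80% CTR improvement; 31% better cost per purchase |
| Customer segmentation (Hansa Cequity clients) | RFM modelling, propensity scoring, share-of-wardrobe analysis | Klaviyo, HubSpot, free-tier CDPs | Identified which customers to target, when, and with what message |
| AI video creation (WPP + Authentic Brands Group) | Google Veo 3 and Imagen 4 via Monks | Runway, Pika | Hundreds of creative variations produced in hours |
| Content marketing for organic SEO (GrowthMentor) | AI-assisted keyword research, topic clustering, and outlines | Not named | Content engine built from near-zero organic presence |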

The Attribution Problem: Why You Cannot Trust Most AI Marketing Metrics

Before you take any of the numbers in this article and present them to your leadership team as benchmarks, read this section.

The majority of metrics cited in AI marketing content are vendor-reported. That includes several of the numbers above. Phrasee published the Farfetch data. Persado published the JPMorgan Chase data. Revieve published the A.S. Watson data.

This does not mean the numbers are false. It means you should treat them as directional rather than precise. A vendor with a strong incentive to publish only favorable outcomes will not include the A/B tests that did not lift, the campaigns that ran for three weeks before being cut, or the implementations that produced a 3% improvement instead of 31%.

The way to get trustworthy data is to run your own experiment with a control group and measure it yourself. A 10% open rate lift on your own list, measured against a holdout, is worth more than a 31% lift reported by the tool vendor on someone else’s list. Your data is attributable. Theirs is not.
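
Measuring against a holdout takes a few lines of arithmetic. The sketch below computes the relative lift and a two-proportion z-test, a standard check for whether a gap between two rates is larger than chance would explain. The function name and the send and open counts are hypothetical. The test also assumes recipients were assigned to the two groups at random.

```python
from math import erf, sqrt


def lift_with_holdout(treat_opens, treat_sent, hold_opens, hold_sent):
    p_treat = treat_opens / treat_sent
    p_hold = hold_opens / hold_sent
    # Pooled open rate under the assumption that the AI variant changed nothing.
    pooled = (treat_opens + hold_opens) / (treat_sent + hold_sent)
    std_err = sqrt(pooled * (1 - pooled) * (1 / treat_sent + 1 / hold_sent))
    z = (p_treat - p_hold) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = 2 * (1 - 0.5 * (1 + erf(abs(z) / sqrt(2))))
    return {"relative_lift": (p_treat - p_hold) / p_hold, "z": z, "p_value": p_value}


# Hypothetical send: 10,000 recipients got AI subject lines, 2,000 were held out.
result = lift_with_holdout(treat_opens=2200, treat_sent=10000,
                           hold_opens=400, hold_sent=2000)
print(result)
```

A small holdout makes the test noisy, so a lift that looks large on a few hundred recipients may not hold up. Size the holdout before the send, not after.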

Attribution is also genuinely hard in AI marketing. When a campaign that used AI subject lines, AI-assisted segmentation, and an AI-optimized send time outperforms a previous campaign, you cannot isolate which of the three variables drove the lift. The honest answer is that you do not know and neither does anyone else. What you know is the aggregate outcome and which tools were in the stack. That is enough to make a decision about whether to continue.

What the Enterprise Examples Are Actually Useful For

I am not saying the Netflix, Starbucks, and Spotify examples are worthless. They are not. The problem is that most marketers apply them at the wrong level. They try to extract a tactic when the value is architectural.

Netflix personalizes content recommendations for 270 million subscribers using a recommendation model trained on billions of viewing signals. The tactic is: show each user the content most likely to keep them watching. The architecture is: years of proprietary data accumulation, a recommendation engine built by a large ML team, and continuous model retraining. Extract the tactic and try to apply it without the architecture and you get: “personalize your content recommendations.” Which is true and completely useless.

Extract the principle instead and you get something you can act on. The Netflix principle is not “personalize recommendations.” It is: use behavioral data (what people actually do) rather than stated preferences (what people say they like) to drive personalization. That principle applies at any scale.

When you encounter an enterprise AI marketing example, extract the principle, not the tactic.

Here is what that looks like in practice across four commonly cited examples.

Starbucks Deep Brew. The tactic: use AI to recommend drinks in the app and at the drive-thru. The architecture: a system that connects operational data (machine diagnostics, traffic patterns, store inventory) with customer data (order history, location, time of day) and uses the combined signal. The principle: connect operational data and customer data to the same model. The accessible version: Klaviyo’s back-in-stock automation combined with purchase history-based product recommendations for a Shopify store. Different scale. Same logic. Implementation Tier: Solo.

Netflix thumbnail optimization. The tactic: show each user a different thumbnail for the same show based on what is likely to generate a click from them specifically. The architecture: multivariate testing infrastructure running continuously across millions of users. The principle: run continuous creative testing and let behavioral data decide what works, rather than picking one creative direction and committing to it. The accessible version: run A/B tests on email header images in Mailchimp. Use Meta’s dynamic creative to serve different ad images to different audience segments. Implementation Tier: Solo.

Spotify Discover Weekly. The tactic: generate a personalized weekly playlist using collaborative filtering and audio analysis. The architecture: a recommendation model trained on listening behavior across 600 million users. The principle: behavioral data (listening patterns) predicts preference more accurately than stated preference (explicit ratings or genre selections). The accessible version: use Klaviyo’s predictive analytics to segment customers by actual purchase behavior rather than manually assigned interest tags. What people buy is more predictive than what they say they want. Implementation Tier: Solo to Small Team.

Verizon AI-powered call routing. In 2024, Verizon used GenAI to predict the reason behind 80% of incoming customer service calls before they were answered, reducing in-store visit time by 7 minutes per customer and preventing an estimated 100,000 customers from churning, according to Reuters reporting on CEO Hans Vestberg’s statements. The architecture: a large-scale call prediction model trained on millions of inbound interactions. The principle: AI’s highest-value application in customer operations is often prediction and routing, not generation. The accessible version: Intercom’s Fin or Zendesk AI for ticket triage and routing in a SaaS support queue. Implementation Tier: Small Team.

When AI Marketing Goes Wrong: Two Examples Worth Learning From

The success gallery approach to AI marketing content creates a distorted picture. Here are two categories of AI marketing implementation that underdelivered or created problems, and what the failure mechanism was.

Brand voice erosion through AI content at scale. Multiple brands that scaled content production rapidly using generative AI without a strong editorial layer found that within 6 to 12 months, their content had drifted. Individual pieces were technically correct and on-topic. But the cumulative effect was a voice that sounded like everyone else’s AI-generated content: competent, generic, and indistinguishable. The failure mechanism was not the AI. It was the absence of editorial judgment about what the brand actually sounds like and what it is not willing to say. AI generates to the average of its training data. Without a strong human voice layer, content regresses to that average.

AI personalization surfacing inappropriate recommendations. Several e-commerce brands have experienced AI recommendation engines surfacing contextually inappropriate products: a customer who purchases a sympathy card receives an upsell recommendation for party supplies, or a customer who purchases infant products receives recommendations for adult categories without appropriate filtering. The failure mechanism: the recommendation model optimizes for purchase probability without constraints. It does not understand context. The fix is not to abandon AI recommendations. It is to build category exclusion logic and content filtering into the recommendation rules before the model runs.
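
Category exclusion logic of this kind can be as plain as a lookup table applied to the candidate pool before anything is scored. The sketch below is a minimal illustration: the `EXCLUSIONS` mapping, category labels, and product IDs are all hypothetical, and a production rule set would need to be maintained by someone who knows the catalog.

```python
# Hypothetical rules: cart category -> categories that must never be recommended.
EXCLUSIONS = {
    "sympathy": {"party_supplies", "celebration"},
    "infant": {"alcohol", "adult"},
}


def eligible_products(cart_categories, catalog):
    """catalog: list of (product_id, category).
    Returns only the products the recommendation model is allowed to score."""
    blocked = set()
    for category in cart_categories:
        blocked |= EXCLUSIONS.get(category, set())
    return [(pid, cat) for pid, cat in catalog if cat not in blocked]


catalog = [("balloons", "party_supplies"), ("candle", "home"),
           ("thank_you_card", "stationery")]
print(eligible_products({"sympathy"}, catalog))
```

Because the filter runs before scoring, the model never sees the blocked items, so no purchase-probability estimate can override the rule.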

Both failure categories share a root cause: treating AI as an autonomous decision-maker rather than as a tool that requires human constraint-setting and ongoing oversight.

Where to Start

The only thing worse than reading five AI marketing articles and finding no applicable examples is reading a sixth one that ends with “just start experimenting.” So here is a specific process instead.

- Identify the one marketing activity in your current workflow that is the most time-intensive and the least judgment-dependent: brief creation, subject line writing, audience list segmentation, or social comment routing, for example. That is your AI entry point. Not because it is the most impressive place to apply AI, but because it is the place where you can run an experiment without disrupting anything strategic.

- Find the Solo Tier or Small Team Tier example in this article that maps to that activity. Identify the accessible tool equivalent listed there. Sign up for the free trial if one exists.

- Run the experiment for 30 days. Measure the before-and-after using a metric you already track: open rate, CTR, time-to-publish, cost-per-lead. Do not invent a new metric for the experiment. Measure something you have historical data on.

- Document the result in a single shared document your team can read. Name the tool, the tactic, the result, and what you would do differently. This is your first-party case study. It is worth more than anything you will find in a vendor’s marketing materials because it is attributable to your audience and your context.

- After one documented experiment, pick the next one. AI marketing compounds through repeated, documented experiments. It does not arrive as a finished strategy.
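
The documentation step in the process above can be as lightweight as one structured record per experiment. This is a sketch only: the `Experiment` fields mirror the items named in the list (tool, tactic, result, what you would do differently), and the tool name and numbers in the usage example are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Experiment:
    tool: str
    tactic: str
    metric: str       # a metric you already track, with historical data
    baseline: float   # value before the experiment
    result: float     # value after the experiment
    days: int = 30
    notes: str = ""   # what you would do differently

    def relative_change(self) -> float:
        return (self.result - self.baseline) / self.baseline


def to_log_entry(exp: Experiment) -> str:
    record = asdict(exp)
    record["relative_change"] = round(exp.relative_change(), 4)
    return json.dumps(record)


# Invented numbers, for illustration only.
print(to_log_entry(Experiment(
    tool="Klaviyo", tactic="AI subject line variants", metric="open_rate",
    baseline=0.20, result=0.23, notes="Use a larger holdout next time")))
```

Appending one line per experiment to a shared file gives the team a searchable history without any new tooling.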
