You subscribed to an AI writing tool. You ran content through it. You spent the next two hours editing out everything that sounded wrong. The efficiency gain never arrived. The problem is not the tool. The problem is that every roundup told you which tool was best without explaining what each one is actually for. I have spent three years building the content engine at GrowthMentor and personally evaluated 500+ tools while building Shnoco. The right question is not which AI content marketing tool is best. It is which tool belongs at which stage of your workflow.

Most Tool Roundups Answer the Wrong Question

Most content marketers approach this the same way. They read a roundup, pick a tool that sounds credible and falls within budget, sign up, and start generating. Three months later, the editing overhead has not shrunk. The content still needs significant work before it is publishable. The efficiency gain is somewhere in the fine print.

That is not a bad tool problem. That is a category confusion problem.

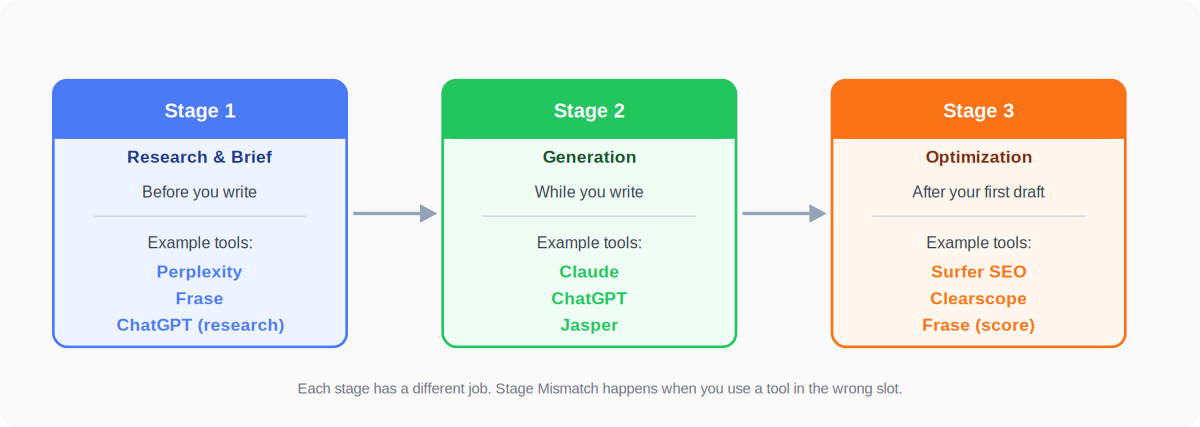

AI content marketing tools fall into three functionally distinct categories. Research and brief tools help you understand what is worth writing, for whom, and where the gap is before you write a word. Generation tools produce the actual draft. Optimization tools score and refine the draft so it has a realistic chance of ranking. Each category has a different job. Each has a different failure mode when used incorrectly.

Most AI content marketing tool roundups answer the wrong question: they tell you which tool is best, when the only question worth answering is which tool belongs where in your workflow.

When you deploy a generation tool where you needed a brief tool first, you get exactly what everyone complains about: output that sounds competent but has no differentiation, no specific point of view, nothing that separates it from every other piece generated on the same keyword. The tool is not broken. It is in the wrong slot.

Call this a Stage Mismatch: using the right type of software in the wrong part of your content process. Fix the slot, and the tool starts working.

The Three Categories, Defined

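Before picking any specific product, understand what each category is actually for.

- Stage One (research and brief): work out what is worth writing, for whom, and where the gap is. Tools covered below: Perplexity, Frase, ChatGPT with web browsing.
- Stage Two (generation): produce the draft. Tools covered below: Claude, ChatGPT, Jasper.
- Stage Three (optimization): score and refine the draft so it has a realistic chance of ranking. Tools covered below: Surfer SEO, Clearscope, Frase.

Most of the tools you have heard of are in Stage Two. That is where the marketing budget goes. That is rarely where the bottleneck is.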

Stage One Tools: The Brief Determines the Draft

The most common AI content workflow: open a generation tool, paste in a keyword, generate a draft, spend the next hour editing out everything that sounds generic or wrong. It is the fastest path from zero to words on screen.

It is also exactly why the output sounds like every other piece of AI content on that topic.

A generation model can only produce output as differentiated as the input it receives. This is garbage in, garbage out (GIGO) applied to content. A vague prompt produces a generic draft because the model has no information about what makes your article different, what the reader actually wants to know, or where the existing content on this keyword is weakest. The model fills that gap with whatever is most statistically likely given the input. The result is competent, thorough, and indistinguishable from the competition.

At GrowthMentor, brief quality was the single highest-leverage variable in the content workflow. Articles with a documented brief that included competitive gap analysis and specific reader intent mapping required roughly half the editing time of articles that started from a keyword alone. The generation model did not change between those two sets of content. The input did. That difference in editing time compounded over months into a meaningful gap in output volume and ranking performance.

The fix is not a better generation tool. It is a better brief.

At Stage One, the tool's job is research and structure: understand what is ranking for the target keyword, why it is ranking, what the reader actually wants to know, and where there is a gap your article can fill. The output of Stage One is not a keyword. It is a brief with a point of view.
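To make "a brief with a point of view" concrete, here is a minimal sketch of a brief template as a Python dataclass. The field names (`target_keyword`, `why_they_rank`, `content_gap`, and so on) are hypothetical labels for the Stage One questions above, not the schema of Frase or any other tool. The check simply refuses to call a brief ready when only the keyword is filled in.

```python
from dataclasses import dataclass, field


@dataclass
class ContentBrief:
    """Hypothetical Stage One brief template; field names are illustrative only."""

    target_keyword: str
    # What is ranking for the keyword, and why it is ranking.
    ranking_pages: list[str] = field(default_factory=list)
    why_they_rank: str = ""
    # What the reader actually wants to know.
    reader_intent: str = ""
    # Where the existing content on this keyword is weakest.
    content_gap: str = ""
    # The angle that separates this article from everything else on the keyword.
    point_of_view: str = ""

    def missing_fields(self) -> list[str]:
        """Return the names of brief fields that are still empty, in template order."""
        checks = {
            "ranking_pages": bool(self.ranking_pages),
            "why_they_rank": bool(self.why_they_rank.strip()),
            "reader_intent": bool(self.reader_intent.strip()),
            "content_gap": bool(self.content_gap.strip()),
            "point_of_view": bool(self.point_of_view.strip()),
        }
        return [name for name, filled in checks.items() if not filled]

    def is_ready_for_generation(self) -> bool:
        """A brief is ready only when every field is filled, not just the keyword."""
        return not self.missing_fields()


# A keyword-only "brief": the starting point this section warns against.
keyword_only = ContentBrief(target_keyword="crypto tax software")
print(keyword_only.missing_fields())
# ['ranking_pages', 'why_they_rank', 'reader_intent', 'content_gap', 'point_of_view']
```

Treating the brief as a gate before generation, rather than a nice-to-have, is the point: the draft step only starts once every question has an answer.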

The Brief Tools Worth Using (and One That Is Overrated)

Perplexity does real-time research with source citations. For fact-checking, understanding the current information landscape around a topic, and surfacing recent data before you write, it is faster than any manual research workflow I have used. Best for: any article that references recent statistics, events, or rapidly changing subject matter.

Frase pulls SERP data for your target keyword and builds a brief showing what the top-ranking pages cover, at what depth, and where the gaps are. The brief creation feature alone justifies the entry-level price for teams publishing more than four articles a month. Best for: SEO-focused content where competitive gap analysis needs to be fast and systematic. For deeper guidance on how brief quality connects to your broader planning, the AI content strategy guide covers how to structure your topic calendar before opening a generation tool.

ChatGPT with web browsing enabled is the most flexible Stage One tool for iterative research. The conversational interface means you can probe, follow a thread, and build a nuanced brief through dialogue. Best for: solo marketers who think through problems in conversation rather than structured templates.

The one that is overrated at Stage One: Jasper. Jasper is a generation tool with brief and research features added. It is worth using at Stage Two. At Stage One, its brief quality does not match a purpose-built tool like Frase, and the cost difference over a standalone research tool is hard to justify for this specific job.

Stage Two Tools: Generation Is Not a Commodity

The assumption behind most “which AI writing tool should I use” searches: generation tools are interchangeable. They all use large language models. They all produce text. The differences are cosmetic, pricing-driven, or determined by whichever one has the longest feature list in the comparison table.

This is wrong in a way that costs real time and money.

Generation tools differ on three dimensions that actually matter for content marketers: how much context they can process in a single session, how well they handle long-form nuanced topics versus short-form copy, and what workflow integration they offer for teams that need brand voice consistency across multiple writers. These three dimensions produce genuinely different tool choices depending on your situation.

I run SEO and content strategy for KoinX, a crypto tax SaaS with 1.5 million users. The content challenge there is specific: a crowded category, a regulatory landscape that changes constantly, and a reader sophisticated enough to notice when a technical detail is wrong. That context shaped every tool decision in the content stack.

For long-form articles on nuanced regulatory topics, Claude was the right choice. Its 200K-token context window meant I could pass in a full brief, reference documents, and a style guide in a single session and get coherent output back. Its prose quality on technical content is the strongest of any generation tool I have tested. For research iteration, rapid outline drafting, and shorter-format pieces, ChatGPT was the right choice because of its flexibility and conversational interface. For a team environment where multiple writers need to produce content in the same voice, Jasper is the right choice because of its Brand Voice training. One content operation can justify all three tools. That is not a sign of an inefficient stack; each tool is doing the job it was built for.

This distinction is worth understanding at a deeper level before you decide. The guide on how AI content generation actually works covers the mechanics of what each model type does and where differences in output quality come from.

The Role-Based Selector: Which Tool for Your Situation
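As a rough, hypothetical illustration, the "Best for" notes in this article can be collapsed into a small lookup that maps a need to a tool and a workflow stage. The need labels and the `select_tools` function are assumptions made for this sketch; the tool-to-need pairings restate this article's recommendations and are not vendor data.

```python
from collections import defaultdict

# Each entry restates one "Best for" note from this article.
# The need labels are hypothetical shorthand, not product categories.
BEST_FOR: dict[str, list[tuple[str, str]]] = {
    "recent statistics or fast-changing topics": [("Stage One", "Perplexity")],
    "fast, systematic SERP gap analysis": [("Stage One", "Frase")],
    "research through conversation": [("Stage One", "ChatGPT with web browsing")],
    "long-form, technical drafts": [("Stage Two", "Claude")],
    "outlines and shorter pieces": [("Stage Two", "ChatGPT")],
    "one brand voice across many writers": [("Stage Two", "Jasper")],
    "deepest optimization data": [("Stage Three", "Surfer SEO")],
    "grading that non-SEO writers can follow": [("Stage Three", "Clearscope")],
    "brief and optimization on a small budget": [
        ("Stage One", "Frase"),
        ("Stage Three", "Frase"),
    ],
}


def select_tools(needs: list[str]) -> dict[str, list[str]]:
    """Group the tools for the given needs by workflow stage, without duplicates.

    Raises KeyError for a need that is not in BEST_FOR.
    """
    stack: dict[str, list[str]] = defaultdict(list)
    for need in needs:
        for stage, tool in BEST_FOR[need]:
            if tool not in stack[stage]:
                stack[stage].append(tool)
    return dict(stack)


# Example: a hypothetical solo marketer's needs.
solo_stack = select_tools([
    "research through conversation",
    "long-form, technical drafts",
    "brief and optimization on a small budget",
])
print(solo_stack)
# {'Stage One': ['ChatGPT with web browsing', 'Frase'], 'Stage Two': ['Claude'], 'Stage Three': ['Frase']}
```

Keying by need rather than by job title keeps each pick traceable to one stated recommendation, and it lets a tool like Frase legitimately appear in two stages.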

Once the generation workflow is running, the next question is usually output volume. The guide to scaling content production with AI covers the process layer that sits around these tools, and how to get consistent throughput without acting as the manual integration point between platforms yourself.

Stage Three Tools: Where Rankings Are Actually Won

Most AI content workflows end at publishing. Generate a draft, run a light edit, add the keyword a few times, publish. The logic: the content is good, the AI helped produce it efficiently, volume will do the work.

This is the logic behind most of the “AI content does not rank” complaints in practitioner communities.

The pattern practitioners call content decay, where rankings and traffic slide after an article is published, tends to show up in content that passes a keyword check but fails on topical depth and expertise signals.

Google evaluates more than keyword presence. It evaluates topical depth: does this article cover the subject the way a genuine expert would, or does it skim the surface? It evaluates expertise signals: is there something in this article that required real knowledge to write, or could anyone have produced it after 20 minutes of searching? Optimization tools provide the map for both. They analyze what the top-ranking pages for your target keyword actually contain, which related terms they cover, and how deep they go on each sub-topic. Without that map, you are guessing.

According to Content Marketing Institute’s B2B Content Marketing Benchmarks research, nearly three-quarters of B2B marketers use generative AI, but 61% say their organization lacks guidelines for its use. That gap between adoption and workflow structure is exactly where ranking performance breaks down. The generation step is being handled. The process around it, including the optimization step that determines whether the output is actually rankable, is being skipped.

The AI content marketing adoption and ROI data page has the broader picture on where teams are seeing returns from AI content and where they are not. The pattern holds: adoption is widespread, but performance gains are concentrated in the teams running a complete workflow.

Adding an optimization step before publishing is the highest-ROI change most content teams can make to their AI workflow today.

Surfer vs. Clearscope vs. Frase: The Honest Comparison

Surfer SEO provides a real-time content score as you draft, with NLP-driven term suggestions showing which topics to cover and at what depth. Its data layer is the strongest of the three: it analyzes more SERP signals and produces more granular recommendations than either alternative. Best for: content teams with an existing SEO workflow who want the deepest optimization data available. Honest limitation: the interface has a learning curve, and for teams new to optimization scoring, the recommendation volume can be overwhelming before you know which signals to prioritize. The AI SEO tools comparison has a more detailed breakdown of how Surfer compares to other AI SEO tools on data sources and pricing tiers.

Clearscope has a cleaner interface and more consistent grading. For a content team where writers are not SEO specialists, the accessibility of Clearscope’s scoring makes it the faster tool to adopt and use consistently. The grading is clear and actionable without requiring SEO expertise to interpret. Best for: teams newer to optimization scoring, or teams where the editor is the SEO-aware person and the writers are not. Honest limitation: less granular data than Surfer; the pricing is harder to justify for solo practitioners.

Frase is the most accessible entry point. It combines brief creation at Stage One and optimization scoring at Stage Three in one platform, which reduces the number of subscriptions needed and the friction of moving between tools. For a solo marketer or small team that needs both brief quality and optimization without a large tool budget, Frase is the most practical starting point. Honest limitation: the AI-generated copy Frase produces for the drafting step is not as strong as Claude's or ChatGPT's; use it for brief building and optimization scoring, not for generation.

Here is the three-step audit to run on your current stack before subscribing to anything new.

- List every AI tool you currently pay for and assign each one to its actual workflow stage: Stage One (research/brief), Stage Two (generation), or Stage Three (optimization). If you have two or three generation tools and zero optimization tools, you have found the gap.

- Find the Stage Mismatch. Identify the one workflow stage where you are either unequipped (no tool doing that job) or misaligned (using a tool in the wrong slot). For most content teams this shows up in one of two places: brief quality at Stage One, or optimization at Stage Three.

- Before buying anything new, test the fix within your existing tools. If brief quality is the problem, build one structured brief using Perplexity and a simple template before generating your next article. If optimization is the problem, run your last three published articles through the free tier of Frase and check the content score. The data will confirm whether the gap is real.
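The first two audit steps can be sketched in a few lines of Python. This sketch assumes you record each paid tool against the stage it is actually used for; the function names and the tool list in the example stack are hypothetical. It only catches the unequipped case automatically. Spotting a misaligned tool still takes judgment.

```python
STAGES = ("Stage One", "Stage Two", "Stage Three")


def group_by_stage(paid_tools: dict[str, str]) -> dict[str, list[str]]:
    """Audit step 1: list each paid tool under the stage it is actually used for."""
    by_stage: dict[str, list[str]] = {stage: [] for stage in STAGES}
    for tool, stage in paid_tools.items():
        if stage not in by_stage:
            raise ValueError(f"{tool!r} has unknown stage {stage!r}")
        by_stage[stage].append(tool)
    return by_stage


def unequipped_stages(by_stage: dict[str, list[str]]) -> list[str]:
    """Audit step 2 (partial): stages where no tool is doing the job.

    Misaligned tools (right tool, wrong slot) still need a human review.
    """
    return [stage for stage, tools in by_stage.items() if not tools]


# Hypothetical stack: three generation tools, nothing doing optimization.
my_stack = {
    "ChatGPT": "Stage Two",
    "Jasper": "Stage Two",
    "Claude": "Stage Two",
    "Perplexity": "Stage One",
}
print(unequipped_stages(group_by_stage(my_stack)))  # ['Stage Three']
```

The example reproduces the gap described in step one: plenty of generation capacity, no optimization step.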

If you want help auditing your current AI content stack or building the workflow from scratch, reach out at shankar@shno.co.