You have read the lists. They all say the same thing: ChatGPT, Jasper, Surfer, Canva, and whatever got a good affiliate deal this quarter. The problem is not the list. It is that every list answers the wrong question. They tell you what tools exist. None tell you which ones survive contact with an actual workflow. I have evaluated over 500 tools while building Shnoco and have run AI-assisted content strategy for SaaS clients for three years. Most AI marketing tools do not compound. A few do. The difference is not features. It is whether the tool removes a bottleneck you actually have.

Every AI Marketing Tool List Is Organized the Wrong Way

Go to any major “best AI marketing tools” roundup. HubSpot, G2, Sprout Social, take your pick. They all follow the same structure. Content creation tools in one column. SEO tools in another. Social media tools in a third. You find the column that matches your job title, scan the descriptions, pick the tool with the best copy or the logo you have seen before, and close the tab feeling like you did research.

That structure is designed around how tool vendors want you to think about their products. It is not designed around how your week actually works.

Most AI marketing tool lists are organized around features. The ones that actually change how you work are organized around workflow bottlenecks, and for most marketers, those bottlenecks number three or fewer.

Feature-category browsing fails for a specific reason. When you browse by category, you end up evaluating tools against a general job description rather than against a concrete daily friction point. A content marketer who already produces content does not need ten content tools. They need the one tool that removes the part of their workflow that costs the most time per unit of output. Feature-category lists cannot identify what that part is, because those lists are built around what the tools do, not around what your day looks like.

The Gartner 2025 Marketing Technology Survey found that only 49% of martech tools are actively used across the organizations surveyed. That is not a tool quality problem. It is a selection method problem. The tools that don’t get used are almost always tools selected by category browsing rather than by specific friction. For context on how volatile this number has been: Gartner recorded 58% utilization in 2020 and just 33% in 2023. The conclusion holds regardless of the precise figure: marketers keep buying tools and keep not using them.

According to the martech adoption data I track for Shnoco, the pattern holds at every scale: from solo practitioners to mid-size teams, the tools abandoned fastest are the ones added to cover a category rather than to remove a named friction point.

After three years of personally evaluating 500+ tools across the no-code and marketing categories for Shnoco, one pattern stood out: the tools with the highest abandonment rate were almost always tools whose function was already covered by something the user had. The tools that stuck were the ones that solved a specific, named daily friction point, regardless of which category they officially belonged to.

Before you open any tool comparison page, write down the three things in your marketing workflow that eat the most time per week, produce the most inconsistent output, or require manual effort that should not need a human. That is your shopping list. Not the category directory.

The Bottleneck Stack: How to Build a Tool List That Actually Holds

When AI tools started proliferating in 2022 and 2023, the default response was to subscribe broadly and figure out the use cases later. Try everything. Keep what sticks. It sounded pragmatic. Most marketers I know ended up with what the community accurately calls a “tool graveyard”: active subscriptions for platforms they haven’t logged into in 60 days, three tools doing roughly the same thing, and a monthly SaaS bill that looks nothing like the value they are getting.

The broad-subscription approach has a cost beyond the obvious one. Tool evaluation is itself a workflow tax. Every tool you trial requires setup time, an onboarding sequence, and the mental overhead of learning a new interface. That time comes from somewhere. For a solo practitioner or a lean team, the opportunity cost of evaluating tools that never make it into the daily workflow is not trivial.

More importantly: when you subscribe first and evaluate second, you are making your decision inside the vendor’s demo environment. Demo environments are designed to make tools feel powerful. Your actual workflow is not a demo environment. A tool that impresses you in a 14-day trial, with clean example data and a fresh workspace, often feels awkward and friction-heavy when inserted into how you actually work on a Tuesday afternoon.

At Shnoco, the evaluation model was deliberately inverted. Identify the specific workflow friction first. Ask: what task in my current process takes the most manual time with the least predictable output? Then test tools specifically against that friction, with real work, not example prompts. Of the 500+ tools evaluated across three years, fewer than 15% made it into a “worth paying for” recommendation. The rest were technically functional. They solved problems the target reader either did not have or did not have at a level of pain that justified a monthly subscription.

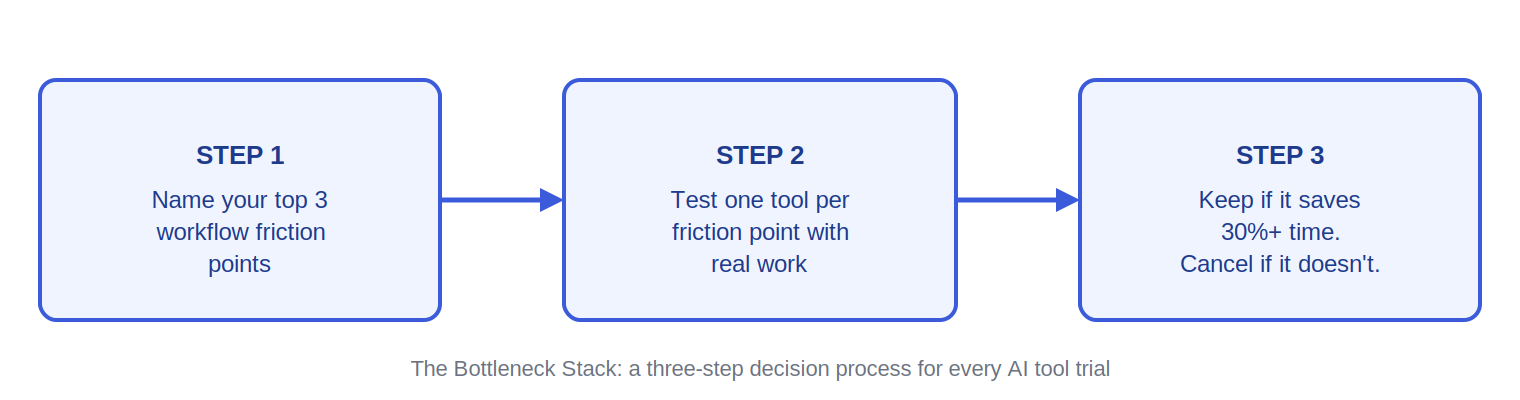

Bottleneck Stack: the practice of identifying your two to three highest-friction workflow moments before evaluating any AI tool, then building your stack exclusively around those moments rather than browsing by feature category.

The name matters because it forces a different starting point. You are not asking “what AI tools exist?” You are asking “what is slowing me down most, and is there a tool that removes exactly that friction?” Those are different questions. They lead to different stacks. They lead to much shorter stacks.
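One way to make that starting point concrete is to score your own week before looking at any tool. The sketch below is a minimal illustration, not a formal method: the `WorkflowMoment` fields, the `friction_score` weighting (weekly hours inflated by a rework rate as a stand-in for unpredictable output), and the example numbers are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowMoment:
    name: str
    hours_per_week: float  # manual time spent on this task each week
    rework_rate: float     # share of outputs that need a redo, 0.0 to 1.0


def friction_score(moment: WorkflowMoment) -> float:
    # Hypothetical weighting: time cost, inflated by how unpredictable the output is.
    return moment.hours_per_week * (1.0 + moment.rework_rate)


def bottleneck_stack(moments: list[WorkflowMoment], limit: int = 3) -> list[WorkflowMoment]:
    # Keep only the highest-friction moments; these become the shopping list.
    return sorted(moments, key=friction_score, reverse=True)[:limit]


# Made-up example week, not measured data.
week = [
    WorkflowMoment("research and briefs", 6.0, 0.2),
    WorkflowMoment("on-page SEO optimization", 4.0, 0.5),
    WorkflowMoment("social image production", 1.5, 0.1),
    WorkflowMoment("email drafting", 2.0, 0.1),
]

shortlist = bottleneck_stack(week)
for moment in shortlist:
    print(f"{moment.name}: {friction_score(moment):.2f}")
```

The weighting matters less than the habit: the list is built from your own hours, and anything that falls below the cut-off is not a reason to evaluate a tool.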

The AI Marketing Tools Worth Keeping, Organized by Bottleneck

This is not a catalogue. It is a short list with editorial judgment applied. For each tool: what specific friction it removes, who it makes sense for, one distinguishing detail you should know before subscribing, and one honest limitation. The “infrastructure” label means daily use, foundational to the workflow. “Situational” means genuinely useful but not a daily driver.

Not every tool here will belong in your stack. That is the point. The Bottleneck Stack approach means you only adopt the tools that map to friction you actually have.

Bottleneck 1: Research and Ideation Speed

If you spend more than 90 minutes per content piece on research, brief creation, and structural thinking before writing starts, this is your primary bottleneck.

Claude is what I use for almost everything in this category. It handles research synthesis, brief creation, angle development, competitor analysis, email drafting, and first-draft structuring in a single interface. The 200K+ token context window is the specific feature that separates it from most alternatives: you can paste an entire content brief, a competitor’s article, and your brand guidelines into one conversation and get output that is aware of all of it simultaneously. That matters for brief creation at the level where the output is actually usable. Free tier is functional. Claude Pro at $20 per month is worth it once you are using it daily. Infrastructure.

Honest limitation: Claude requires precise prompting to produce output that does not need heavy editing. If your prompts are vague, the output will be generic. The efficiency gains only materialize once you develop a set of prompts that are specific to your actual work.

Perplexity fills a gap Claude does not. When I need to verify what the web currently says about a topic, pull recent statistics, or track how a fast-moving space (like AI tools themselves) is being covered right now, Perplexity returns cited sources alongside synthesized answers. Perplexity draws from live web sources rather than training data, which makes it genuinely different from asking Claude a research question. Free tier is sufficient for most research use cases. Situational for most, infrastructure for content-heavy roles.

Honest limitation: Synthesis quality degrades on niche or highly technical topics. Always click through to the cited sources before using a statistic in published content.

One note on the wrapper problem: most “AI research tools” and “AI brief generators” you will encounter in tool roundups are interfaces built on top of Claude, ChatGPT, or Gemini, priced at two to five times what it costs to access those models directly. Before subscribing to any dedicated research or brief creation tool, test whether Claude with a well-crafted prompt does the same job. It usually does.

Bottleneck 2: Content Production and SEO Quality

If your content takes longer to optimize for search than it takes to write, or if you are consistently producing content that does not rank despite solid effort, this is your primary bottleneck.

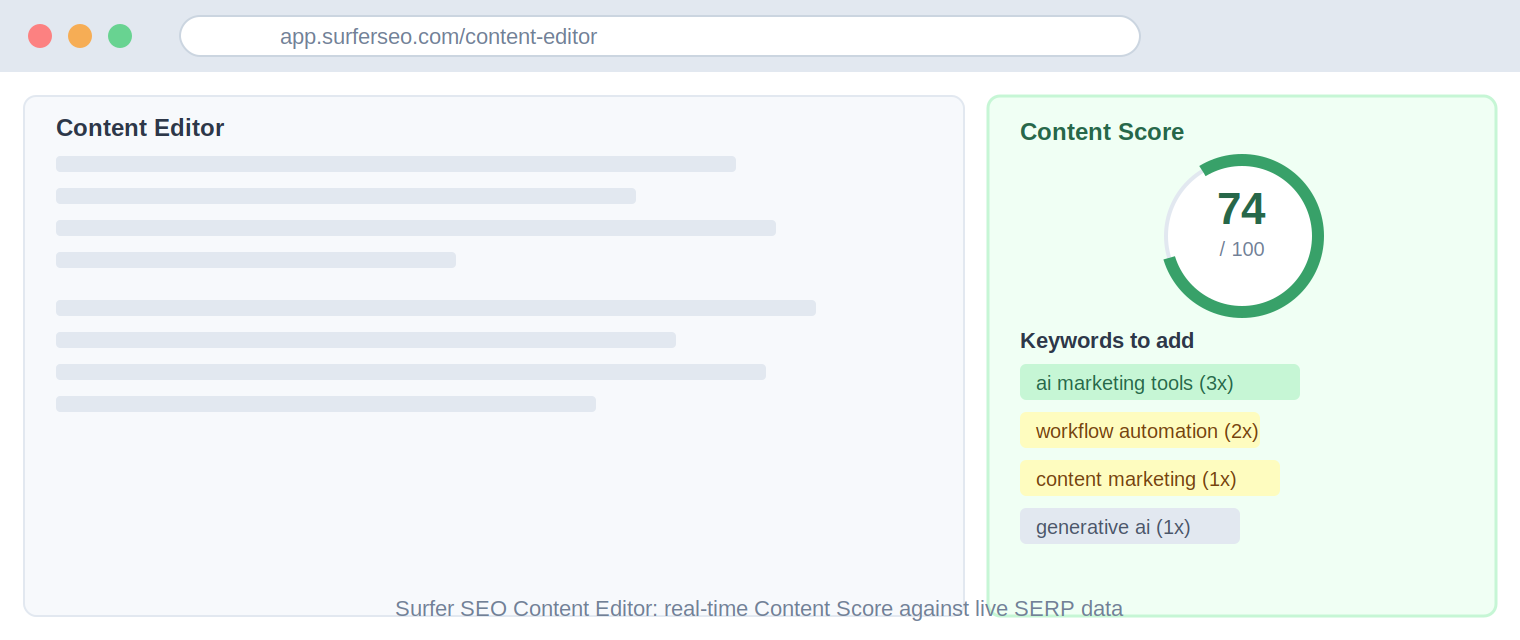

Surfer SEO is the one specialized tool in the content and SEO category that consistently earns its subscription for teams producing four or more articles per month. It scores your draft in real time against the top-ranking pages for your target keyword and tells you what to add, adjust, or cut to close the gap. The Content Score is among the most widely referenced on-page optimization signals in SEO content workflows. For the KoinX content program (crypto tax, a crowded and technically demanding category), Surfer’s content scoring was the fastest reliable way to align draft quality with what was actually ranking, without building a manual analysis every time. Infrastructure for SEO-focused content teams.

Honest limitation: Treating the Content Score as a target rather than a guide pushes writers toward keyword stuffing. The score measures correlation with what currently ranks. It does not tell you why those pages rank. Use it as a quality floor, not a ceiling.

The comparison most people are actually looking for is Jasper versus Claude used directly.

The short answer on whether Jasper is worth it versus using Claude directly: for most practitioners, it is not. Jasper’s core value proposition is brand voice consistency at team scale. If you are a solo practitioner or a small team, Claude with a well-structured brand voice prompt does the same job at a fraction of the cost. Where Jasper earns its subscription is in organizations where multiple writers need to produce on-brand content without one person reviewing everything. That is a specific context, not a universal recommendation.

For teams where content production is the primary bottleneck, the AI content marketing tools breakdown covers the specific platforms in more detail than this overview can. If SEO quality is the dominant friction, the AI SEO tools comparison goes deeper on content scoring, keyword clustering, and SERP matching platforms.

Bottleneck 3: Creative Production at Speed

If visual or video asset production is a meaningful part of your workflow and you are not a trained designer or video editor, this bottleneck applies to you. If your work is primarily text and strategy-focused, it does not. Do not subscribe to tools in this category because they seem useful in general. Subscribe only if creative asset production is a documented time cost in your week.

Canva AI is the right starting point for most non-designers. If you already pay for Canva, the AI features are bundled into your existing subscription. The image generation, background removal, and Magic Design features handle the majority of social media asset, presentation, and simple ad creative needs for a solo practitioner or small team. Infrastructure if you produce visual content regularly; free tier is genuinely sufficient for light use.

Honest limitation: Canva AI’s image generation does not compete with Midjourney for original, campaign-quality visuals. It is best for iteration and template-based production, not for generating the kind of original imagery you would use in a paid campaign.

Midjourney fills that gap. For original visual assets where stock photography is inadequate and a designer is not in the budget, Midjourney produces high-quality results at $10 per month for basic access. The learning curve is real (it grew up inside Discord, which is an unusual workflow, although a web interface is now available), and prompt precision matters significantly. But once you have a set of prompts that produce on-brand results, the time per asset is substantially lower than briefing a designer for every image. Situational for most; infrastructure for teams producing original visual content at volume.

ElevenLabs and HeyGen belong in this section for teams producing audio or video content. ElevenLabs handles voice generation for narration, explainers, and audio-first content. HeyGen handles AI avatar-based video for teams that produce regular video but do not have a studio setup. Both are situational tools with genuine use cases. Neither belongs in your stack if you are not already producing regular audio or video content.

If you are at the start of this process and not ready to commit to paid subscriptions, the free AI marketing tools list covers what is genuinely useful at zero cost before you move to paid tiers.

What I Have Cancelled and What That Tells You

Published tool lists almost never show you the cancellations. The economics of affiliate-based content reviews reward recommending broadly and enthusiastically. A reviewer who cancels three tools after 60 days has no incentive to go back and update their article. So every tool on the list continues to appear as a legitimate option, regardless of how many people quietly let their subscriptions lapse.

The absence of negative signal creates a specific problem: false equivalence. When everything on the list appears equally valid, you have no way to distinguish a tool the reviewer uses daily from a tool they signed up for, wrote one paragraph about, and never opened again. That is not a hypothetical. It describes the majority of the tools on most major roundup pages.

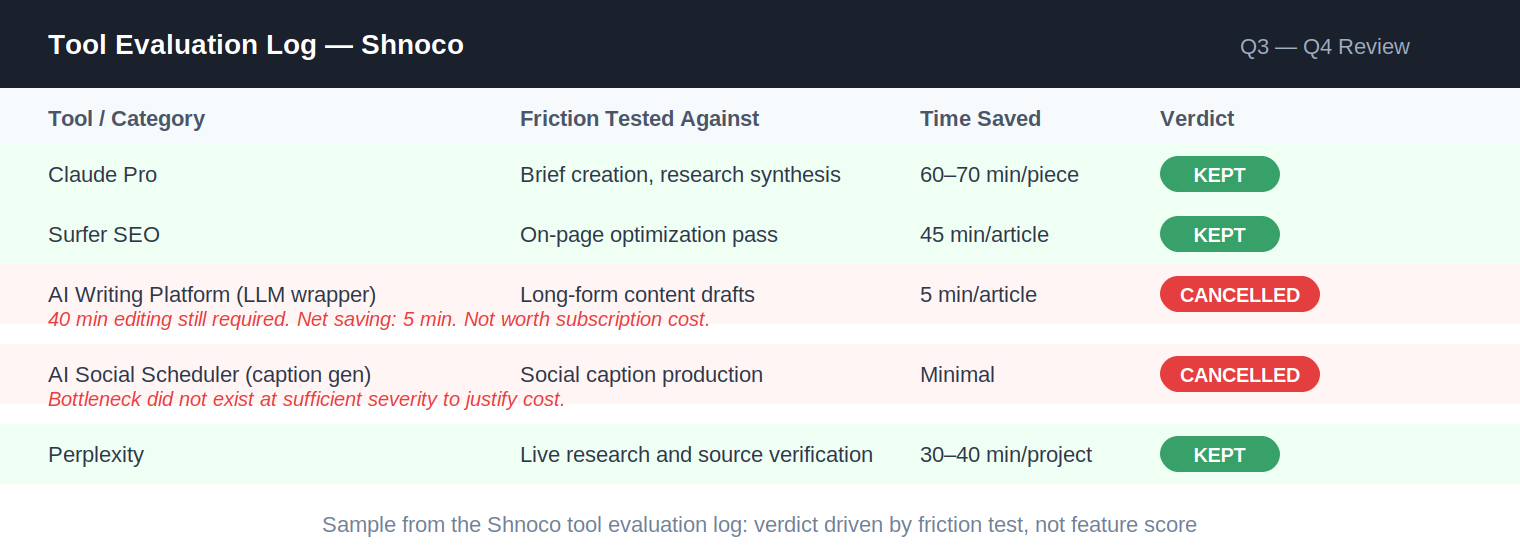

In practice, the tools that get cancelled fall into three categories:

- General-purpose LLM wrappers. These are tools whose primary value proposition is “AI-powered [task]” but whose actual mechanism is an interface built on Claude, ChatGPT, or Gemini, priced at two to five times the cost of accessing those models directly. The interface may be polished. The output is functionally identical to what you would get by prompting the underlying model yourself. I have cancelled multiple tools in this category, including dedicated AI copywriting platforms. Every time, the decision came after realizing the tool was adding cost without adding capability beyond what I already had.

- Workflow friction net-negative tools. These are tools where the setup, prompt crafting, and output editing required more total time than doing the task manually. I trialed one AI writing platform specifically for long-form content for a client project. The output was plausible but generic. Getting it to match the required voice and specificity took approximately 40 minutes of editing per piece. Writing from a solid brief took around 45 minutes. On editing time alone, the tool was saving me five minutes per article, before counting setup and prompt crafting, while adding a subscription cost and a context-switching tax. Cancelled in week three of the trial.

- Solutions to problems I did not have at sufficient severity. These are tools that solve real marketing problems, just not problems that were slowing me down enough to justify the monthly fee. Social media scheduling tools with AI caption generation fall here. The AI features were functional. I was not producing social content at a volume where the time savings justified the cost over a simpler, cheaper scheduler. The tool was solving a bottleneck I did not have.
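The arithmetic behind the second category is worth writing down once. A minimal sketch, using the 45-minute and 40-minute figures from the trial above; the function names, the volume of 8 pieces per month, the hourly rate of 60, the subscription price of 49, and the 10 minutes of prompt crafting are hypothetical inputs chosen only to show the calculation.

```python
def net_minutes_saved(manual_minutes: float, editing_minutes: float,
                      overhead_minutes: float = 0.0) -> float:
    """Minutes saved per piece; a negative result means the tool costs time."""
    return manual_minutes - (editing_minutes + overhead_minutes)


def monthly_value(minutes_saved: float, pieces_per_month: int,
                  hourly_rate: float) -> float:
    """Value of the time saved in a month, in the same currency as hourly_rate."""
    return minutes_saved / 60.0 * pieces_per_month * hourly_rate


# Figures from the trial described above: 45 minutes manual, 40 minutes editing.
saved = net_minutes_saved(manual_minutes=45, editing_minutes=40)

# Hypothetical inputs, not from the trial.
value = monthly_value(saved, pieces_per_month=8, hourly_rate=60.0)
subscription = 49.0
print(f"saved per piece: {saved} min, monthly value: {value:.2f}")
print("covers subscription:", value >= subscription)

# With a hypothetical 10 minutes of prompt crafting per piece, the saving turns negative.
print(net_minutes_saved(45, 40, overhead_minutes=10))
```

Plug in your own volume and rate. If the monthly value does not clear the subscription price with room to spare, the tool belongs in the cancelled column.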

The test is not whether the tool is impressive. The test is whether it removes friction from your actual day.

I want to be direct about what the Shnoco tool evaluation process taught me. Evaluating 500+ tools across three years produced one finding that overrode all the others: the tools that became infrastructure were almost never the ones with the most features or the highest review scores. They were the ones that solved a problem I had every single week, in a way that was faster and more reliable than what I was doing before. That set is very small. It should be small for you too.

How to Build Your Bottleneck Stack: Five Steps

Start here, not with any tool list including this one.

- List the three workflow moments in your current marketing operation that cost the most time per week for the least predictable output. Write them down before you open any tool page. If you cannot name them, you are not ready to evaluate tools yet.

- For each moment, test whether Claude or ChatGPT handles it adequately with a well-crafted prompt. Run your real task, not a demo. If the output is usable after reasonable editing, you do not need a specialized tool for that bottleneck. No new subscription required.

- For the bottleneck where the general-purpose LLM falls short, identify one specialized tool and evaluate it specifically against that task using real work for five working days. Not a demo. Your actual deliverable.

- Run the friction test before renewing. Open the tool and complete a real task. Did it reduce the time cost of that specific task by at least 30%? Did the output hold up without significant manual correction? If both answers are yes, keep it. If either is no, cancel it. Do not let a sunk cost or the fear of missing out override a clear negative signal.

- Check for overlap before adding any second tool. If a new tool replicates functionality already available inside something you pay for, it does not fill a bottleneck. It creates bloat. The goal is the shortest stack that removes your highest-friction moments. That number is almost never more than five tools.
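The last two steps reduce to two small checks. The sketch below is one way to express them, assuming you time the task yourself and describe each tool as a set of feature labels; the function names, the feature labels, and the example stack are illustrative, and only the 30% threshold comes from the steps above.

```python
def passes_friction_test(baseline_minutes: float, tool_minutes: float,
                         output_held_up: bool, min_reduction: float = 0.30) -> bool:
    """Keep the tool only if it cut the task time enough AND the output held up."""
    if baseline_minutes <= 0:
        raise ValueError("baseline_minutes must be positive")
    reduction = (baseline_minutes - tool_minutes) / baseline_minutes
    return reduction >= min_reduction and output_held_up


def overlapping_features(candidate: set[str],
                         current_stack: dict[str, set[str]]) -> dict[str, set[str]]:
    """Map each tool you already pay for to the features the candidate would duplicate."""
    return {
        tool: candidate & features
        for tool, features in current_stack.items()
        if candidate & features
    }


# Hypothetical timings: the task took 60 minutes before and 40 minutes with the tool.
print(passes_friction_test(60, 40, output_held_up=True))
# Faster, but the output needed heavy correction, so it still fails.
print(passes_friction_test(60, 30, output_held_up=False))

# Hypothetical stack and candidate tool, described by feature labels.
stack = {
    "general-purpose LLM": {"drafting", "brief creation"},
    "design tool": {"image generation"},
}
print(overlapping_features({"drafting", "content scoring"}, stack))
```

Both checks are deliberately strict. A tool that passes on speed but fails on output quality, or that mostly duplicates something already in the stack, does not earn a slot.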

If you want help auditing your current marketing stack and identifying which tools are earning their subscription, the AI marketing tech stack guide walks through how to structure that review end to end.