You already know AI is changing marketing. What nobody writes well is the specific part: what it actually does at each funnel stage, what it cannot do, and which stage is worth tackling first. Every guide lists the same tools: content AI at the top, chatbots in the middle, predictive scoring at the bottom. Few of them have run these applications against real campaigns and seen what moves the needle. I have, across retail, banking, financial services and insurance (BFSI), and fast-moving consumer goods (FMCG) brands with real CRM data. Here is what AI actually transforms at every stage, and where the ROI is strongest.

At the Awareness Stage, AI’s Real Job Is Intelligence, Not Output

Before getting into what AI does here, a quick definition: an AI marketing funnel is a standard marketing funnel (awareness, consideration, conversion, retention) where AI tools are applied at each stage to improve targeting, personalisation, content relevance, and conversion outcomes. That is the consensus definition. What the consensus misses is which parts of that improvement are real and which are mostly noise.

Most top-of-funnel (TOFU) AI investment goes to content generation. Teams use large language models to write blog posts faster, create social media variations at scale, and fill a content calendar with less manual effort. They measure the result in output units: posts per week, pieces per month. When leadership asks what AI has changed in marketing, the answer is a content volume number.

The problem is that content volume was never the thing limiting TOFU performance. Distribution quality, topic-to-audience fit, and relevance were. Adding AI-generated content to a channel that was already producing more than your audience was reading does not fix the underlying problem. It accelerates it. The result is lower average quality spread across more pieces, declining engagement per piece, and awareness metrics that look busier while producing fewer qualified entries into the funnel.

Research from Seer Interactive tracking informational queries through September 2025 found that organic click-through rates dropped 61% year-over-year for queries where an AI Overview is present. Adding more content to a channel where AI is increasingly answering the question before a click happens is the wrong response. The right response is getting better at identifying which topics will drive actual visits versus which ones will be absorbed by AI-generated answers before your page is ever seen.

During my SEO advisory work for KoinX, a crypto tax SaaS with over 1.5 million users, I found that the most useful AI application at the awareness stage was not faster content production. It was intent clustering: using AI to group search query patterns into audience mindsets that standard keyword tools do not surface. That changed which topics were worth pursuing, not how fast we published them. The topics we deprioritised as a result would have produced traffic. They would not have produced the right traffic. According to AI content marketing adoption data, production speed remains the dominant use case for AI in content marketing. The intelligence applications are a distant second in adoption. That imbalance is part of why so many TOFU programmes look busy and produce little.

The AI application most TOFU strategies invest in first, content generation, produces the least transformational impact at the awareness stage. The application they consistently underinvest in, audience intelligence and demand signal detection, is where actual transformation happens.

Use AI at the awareness stage primarily for intelligence: identifying emerging demand clusters before they appear in volume data, analysing which topics in your existing content actually drive qualified traffic versus volume traffic, and finding the gaps your audience is searching for that competitors are not serving well. Use it secondarily for production assistance, and only after the intelligence work has determined what is worth producing.

AI for Demand Signals Before Keywords Surface Them

Standard keyword research tools show you what people are already searching for at volume. By the time a topic shows meaningful search volume, most competitors in your category are looking at the same number and making the same content decision.

AI changes this by analysing patterns across a wider surface: search query clusters with low individual volume but high combined intent, social conversation trends that precede keyword adoption by weeks, and first-party engagement data showing which content your existing audience returns to before a topic breaks into mainstream search.

The output is a list of topics your audience is moving toward before the keyword data catches up. That is not a marginal improvement in content planning. It is a structural advantage in when you invest in a topic: early enough that your content is already ranking when the search volume arrives, rather than late enough that you are competing against established pages from day one.
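To make "low individual volume but high combined intent" concrete, here is a minimal sketch. It assumes a simple token-overlap (Jaccard) heuristic as a stand-in for the AI step; a real intent-clustering setup would more likely use embeddings or a language model. The queries, volumes, stopword list, and threshold are all hypothetical.

```python
# Hypothetical query data: (search query, monthly volume). Illustrative numbers only.
QUERIES = [
    ("staking rewards tax", 40),
    ("tax on staking rewards india", 30),
    ("staking income tax rules", 35),
    ("crypto tax calculator", 900),
]

STOPWORDS = {"on", "is", "how", "the", "in", "a"}


def tokens(query):
    """Lowercase the query and drop stopwords."""
    return frozenset(w for w in query.lower().split() if w not in STOPWORDS)


def jaccard(a, b):
    """Share of tokens two queries have in common."""
    return len(a & b) / len(a | b)


def cluster(queries, threshold=0.3):
    """Greedy single pass: join the first cluster whose seed query is similar enough."""
    clusters = []
    for query, volume in queries:
        t = tokens(query)
        for c in clusters:
            if jaccard(t, c["seed"]) >= threshold:
                c["queries"].append(query)
                c["volume"] += volume
                break
        else:
            # No similar cluster found, so this query seeds a new one.
            clusters.append({"seed": t, "queries": [query], "volume": volume})
    return clusters


clusters = cluster(QUERIES)
for c in clusters:
    print(c["volume"], c["queries"])
```

In this toy data, three queries that each look too small to prioritise end up in one cluster whose combined volume is worth a second look. The threshold is a judgment call and would need tuning against real query logs.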

The Consideration Stage Is Where AI Produces the Most Consistent, Measurable Lift

Once a prospect is in the consideration stage, the data picture changes. You have behaviour. You have engagement signals. You have something to model against.

Most teams think about AI at the consideration stage as chatbot deployment. A chat widget is installed, pointed at an FAQ document, and the middle-of-funnel (MOFU) AI work is considered addressed. A smaller number invest in email personalisation through an existing automation platform and classify it as AI because the platform added the word to its marketing materials.

A chatbot that surfaces FAQ answers is a customer service efficiency tool. It is not a consideration-stage transformation. The transformation from AI at this stage is about using behavioural data to predict which leads are moving toward a decision, what will accelerate that movement, and how to deliver different signals to different audiences in real time based on where they are in the evaluation process.

Working with BFSI clients including Axis Bank and Bajaj Finserv, I found that the highest-value consideration stage intervention was not the channel or the message. It was the timing model. The customer who just received a salary credit and the customer who just had a transaction declined can share the same demographic profile. They are in completely different consideration moments. AI-powered event-triggered communications built around lifecycle signals consistently outperformed batch campaign schedules by margins that justified the data infrastructure required to build them. The ROI gap between signal-triggered outreach and standard campaign-based communications is visible in AI marketing performance data across industries. The gap is not small, and it widens as the volume of signals available to the model increases.

Research from Deloitte Insights (2024) found that companies using AI for lead scoring and targeting experienced a 20 to 30% rise in conversion rates, with organisations also reporting 10 to 20% revenue growth in the first year of implementation.

At the consideration stage, AI investment belongs first in the signal layer, not the channel layer.

Signal Layer: the integrated data infrastructure that makes behavioural signals from multiple touchpoints available in real time to trigger differentiated AI-powered journeys.

Once the signal layer exists, the channel (email, chat, ad retargeting) becomes the output of an intelligence system rather than the intelligence system itself. Building the channel first, as most teams do, is like building a distribution network without deciding what to distribute.
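A minimal sketch of what "the channel becomes the output" can look like, assuming a hypothetical event feed and hypothetical journey names. A production signal layer would involve streaming infrastructure and a learned timing model; this only shows the routing idea, where the lifecycle signal rather than the demographic profile selects the journey.

```python
from dataclasses import dataclass


@dataclass
class Event:
    customer_id: str
    kind: str  # hypothetical signal names, e.g. "salary_credit"


# Hypothetical mapping from lifecycle signal to journey; names are illustrative.
JOURNEYS = {
    "salary_credit": "investment_offer",
    "transaction_declined": "support_outreach",
}


def route(event):
    """Pick a journey from the signal, falling back to the batch schedule."""
    return JOURNEYS.get(event.kind, "batch_campaign")


# Two customers with the same profile but different moments get different journeys.
print(route(Event("c1", "salary_credit")))
print(route(Event("c2", "transaction_declined")))
```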

Predictive Lead Scoring vs Traditional Lead Scoring

The difference between these two approaches matters more than most descriptions of it suggest.

Traditional lead scoring works like this: marketing and sales sit together, agree that certain actions are worth certain point values, and assign scores manually. A whitepaper download is worth 10 points. A pricing page visit is worth 20. A score above 50 gets routed to sales. This system is easy to build, easy to explain, and based almost entirely on intuition rather than evidence.

The problem: someone who downloads a whitepaper and never returns is scored identically to someone who downloads the same whitepaper and comes back four times in the following week. The model does not know the difference between engagement that precedes conversion and engagement that does not.
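The blind spot is easy to reproduce. This sketch uses the point values and threshold from the example above; the action labels, and the assumption that return visits are simply missing from the points table, are mine.

```python
# Point values from the example above; action labels are hypothetical.
POINTS = {"whitepaper_download": 10, "pricing_page_visit": 20}
ROUTE_TO_SALES_ABOVE = 50


def rule_score(actions):
    """Sum fixed points per action; actions not in the table score zero."""
    return sum(POINTS.get(action, 0) for action in actions)


one_time = ["whitepaper_download"]
returning = ["whitepaper_download"] + ["return_visit"] * 4

# Both leads get the same score because the table has no concept of return visits.
print(rule_score(one_time), rule_score(returning))
```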

AI-powered predictive scoring works differently:

- The model is trained on your historical conversion data, identifying which combinations of behaviours actually preceded closed deals or purchases in the past, not which behaviours marketing assumed were predictive.

- It surfaces patterns no human-assigned weighting would find: specific sequences of content types, time gaps between sessions, combinations of pages visited that correlate with eventual conversion.

- It updates continuously as new conversion data comes in, rather than remaining static until someone schedules a scoring review meeting.

- It accounts for negative signals as well as positive ones, identifying disengagement patterns that traditional scoring ignores entirely.

Traditional scoring tells sales who looks engaged. Predictive scoring tells sales who is likely to convert, based on evidence from your specific customer base. According to Forrester research on AI-driven lead scoring, companies using AI-powered lead scoring see 20% higher conversion rates and 15% faster sales cycles on average compared to manual scoring methods.
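As a rough illustration of learning from outcomes rather than assigning points, the sketch below estimates conversion rates per behaviour pattern from historical records. It is a deliberately simplified frequency table, not a trained predictive model (which would typically use many more features and something like logistic regression or gradient-boosted trees), and the history is made up.

```python
from collections import defaultdict

# Hypothetical history: (downloaded_whitepaper, return_visits_in_week, converted)
HISTORY = [
    (True, 0, False), (True, 0, False), (True, 0, False), (True, 0, True),
    (True, 4, True), (True, 5, True), (True, 3, True), (True, 4, False),
]


def bucket(downloaded, return_visits):
    """Group leads by behaviour pattern; the 3-visit cut-off is an assumption."""
    return (downloaded, "3+" if return_visits >= 3 else "0-2")


def fit(history):
    """Estimate the observed conversion rate for each behaviour bucket."""
    counts = defaultdict(lambda: [0, 0])  # bucket -> [conversions, total]
    for downloaded, visits, converted in history:
        entry = counts[bucket(downloaded, visits)]
        entry[0] += int(converted)
        entry[1] += 1
    return {b: conv / total for b, (conv, total) in counts.items()}


model = fit(HISTORY)
print(model[bucket(True, 0)])  # lead who downloaded and never returned
print(model[bucket(True, 4)])  # lead who downloaded and came back four times
```

The same whitepaper download now produces two different scores, because the score comes from what happened to similar leads in the past rather than from an agreed point value.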

AI Is Restructuring the Conversion Stage Before Most Funnels Are Ready for It

The consideration stage is where AI helps identify which prospects are moving toward a decision. At the conversion stage, something more structural is happening.

Most AI investment at the bottom of the funnel (BOFU) goes to conversion rate optimisation: AI-assisted A/B testing, dynamic landing pages, personalised CTAs, automated bid management in paid search. These are real improvements. Most teams deploying AI here are seeing genuine lift. The issue is not that this work is wrong. The issue is that it assumes all buyers are still arriving at your conversion assets the same way.

Adobe Analytics data from March 2025 shows that traffic from generative AI sources to U.S. retail sites grew 1,200% between July 2024 and February 2025, doubling every two months. Adobe’s longitudinal tracking of this traffic shows that in July 2024, AI-referred visits were 43% less likely to convert than standard traffic. By February 2025, that gap had closed to just 9%.

What this means: a segment of your buyers is arriving at your conversion assets having already evaluated options, compared pricing, and made a preliminary decision inside an AI-powered conversation. They do not need to be persuaded. They need to be confirmed.

From my own work in retail analytics, the highest-converting customer segment in loyalty databases was rarely the one that went through the standard research-and-compare path. It was the one that arrived through a triggered recommendation from a trusted channel, pre-sold, needing only a frictionless path to complete the action. AI-mediated discovery is producing this pattern at scale. The implication is not that conversion optimisation is irrelevant. It is that a growing share of buyers arriving at BOFU no longer need the persuasion work that conversion pages are built to do.

According to funnel optimization conversion benchmarks, drop-off rates at the conversion stage remain high across categories. A significant portion of that drop-off reflects friction built for a buyer who needed convincing, applied to a buyer who had already decided.

Optimise your conversion assets for two types of buyer. The first is the traditional buyer who followed a multi-touchpoint path and needs your landing page to do persuasion work: clear value proposition, objection handling, social proof, a reason to act now. The second is the AI-referred buyer who arrives pre-evaluated and needs frictionless confirmation rather than persuasion: shorter forms, immediate pricing visibility, a clear next step, no barrier between decision and action.

Structuring Your Conversion Assets for AI-Referred Traffic

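The practical changes are specific, and they differ by buyer type: persuasion elements (value proposition, objection handling, social proof) for the traditional buyer, and friction removal (shorter forms, visible pricing, a clear next step) for the AI-referred buyer.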

The structural data AI systems use to route buyers also matters. Product pages with schema markup, clear pricing, and structured feature lists are easier for AI systems to extract and cite accurately. If your product information is buried in design-heavy pages that require a human to interpret, AI systems will either skip you in their synthesis or misrepresent what you offer.
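For reference, a minimal sketch of product schema markup, generated here in Python. The `Product` and `Offer` types and the `price` and `priceCurrency` properties come from the schema.org vocabulary; the product name, description, and price are hypothetical placeholders.

```python
import json

# Hypothetical product details; replace with your own.
product = {
    "@context": "https://schema.org",
    "@type": "Product",
    "name": "Example Plan",
    "description": "Placeholder description of what the plan includes.",
    "offers": {
        "@type": "Offer",
        "price": "49.00",
        "priceCurrency": "USD",
    },
}

# JSON-LD is embedded in the page inside a script tag of this type.
json_ld = json.dumps(product, indent=2)
script_tag = f'<script type="application/ld+json">\n{json_ld}\n</script>'
print(script_tag)
```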


Retention Is the Highest-ROI AI Application in the Funnel. It Gets the Least Coverage.

Every AI marketing funnel article covers retention last and briefly. This one will not. For most established brands with an existing customer base, the retention stage is where AI delivers the fastest, most measurable return. The coverage gap does not reflect the ROI reality.

Most teams frame retention AI as a single implementation: build a churn prediction model, identify at-risk customers, send a save sequence, done. This framing makes it sound like a project with a completion date. In practice, most teams never get that far, either because the data infrastructure required feels out of reach or because the attribution for retention improvements is harder to present to leadership than acquisition numbers. A new customer produces a clear line from campaign to CRM record. A customer who almost left but did not shows up in nothing.

The cost asymmetry between acquisition and retention is not a new observation. Acquiring a new customer is commonly estimated to cost five to seven times more than retaining an existing one. AI does not change this ratio. It changes what you can do on the retention side. Specifically, AI lets you act on churn signals before the customer has consciously decided to leave, rather than after they have stopped engaging. According to customer churn benchmarks by industry, lapse rates in most categories are consistent enough to be modelled with high accuracy. What changes with AI is not the lapse rate itself but whether you intervene before or after the pattern is complete.

Here is what this looks like in practice.

A few years back I was working with one of the largest department store chains in India, managing their ClubWest loyalty programme. The programme had 2.7 million members and drove the majority of the brand’s annual revenue. Solid programme on paper. But every year, around 15% of members lapsed.

When we dug into why the re-engagement campaigns were not working, we found something uncomfortable. Seventy-three percent of recent lapsers were on DND (the do-not-disturb registry that blocks promotional calls and SMS). Sixty-three percent had not opened a single email in months. Our entire CRM stack was essentially talking to itself. The standard channels had already failed before the re-engagement workflow even triggered. The customers who were going to lapse were unreachable through the tools designed to reach them.

The fix was not a better email. It was a different channel. We built a Facebook Custom Audience from the lapsed member base and ran re-engagement directly in their social feed. No DND restriction. No email open rate to pray over. We met them where they still were. The campaign worked. It won a CMO Asia Award for Best Use of Facebook.

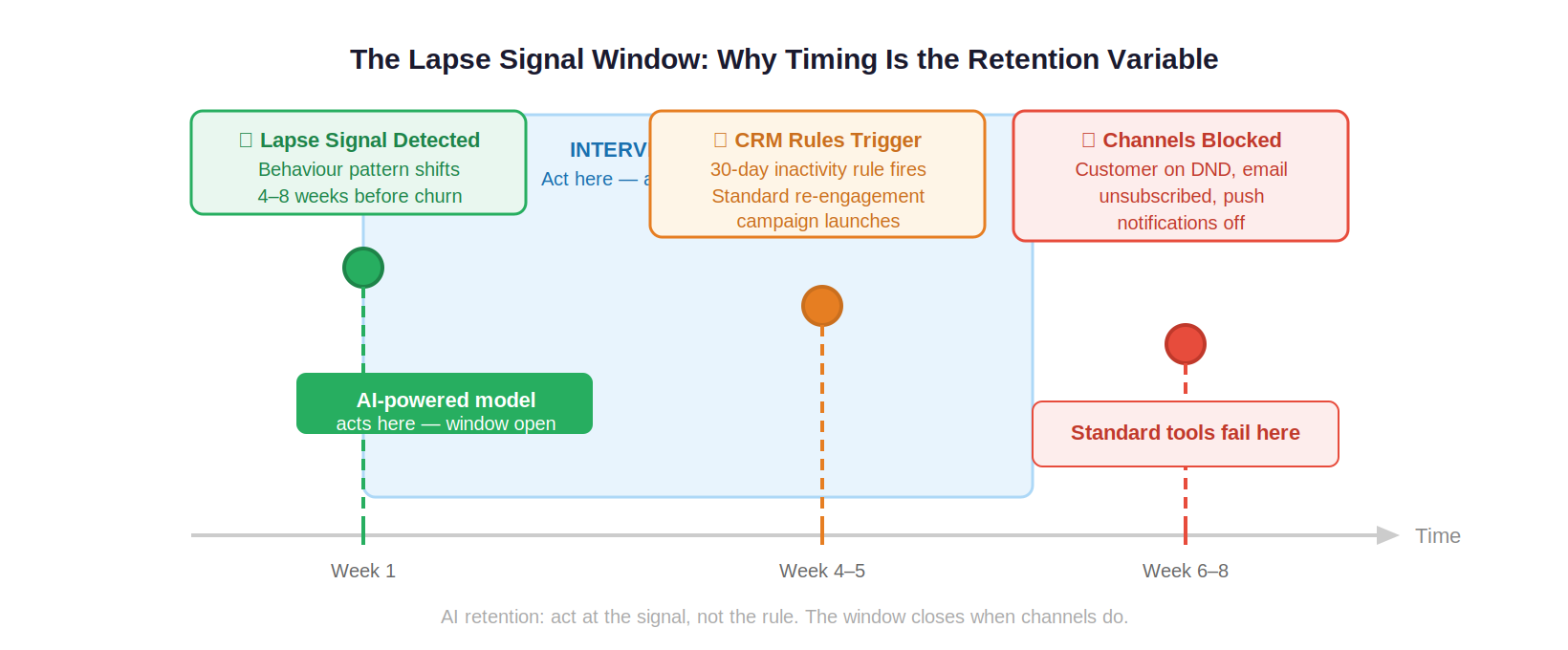

The lesson that applies directly to AI-powered retention: the lapse signal appears in behavioural data weeks before the customer hits DND status. That is the window. Act inside it and you have every channel available. Wait until the standard trigger fires and you may have already lost access to the customer entirely.

Forrester’s 2025 Customer Experience Index found that companies that operationalise predictive customer intelligence reduce churn by 15% to 25% compared to those relying on reactive retention programmes. The difference is not the campaign. It is when the campaign fires.

If you have an existing customer base and you are not using AI on retention before investing in TOFU, you are optimising the wrong end of the funnel. The starting point is a Lapse Signal model.

Lapse Signal: the specific behavioural pattern in your customer data that precedes disengagement, typically appearing four to eight weeks before the customer would be classified as churned by your standard inactivity rules.

Build this on your existing transaction and engagement data. The model identifies which combination of behavioural patterns preceded lapse in your historical data and triggers an intervention at the signal rather than at the calendar-based rule. This does not require an enterprise MarTech stack. It requires clean transaction data, a model trained on your historical lapse events, and an automation layer that fires at the signal.

AI Churn Prediction vs Rules-Based Retention

Most retention workflows are rules-based. If a customer has not logged in for 30 days, send a re-engagement email. If they have not purchased in 90 days, send a win-back offer. These rules are easy to build, easy to explain to stakeholders, and consistently late.

They are late because the 30-day inactivity threshold is not when the decision to disengage happened. It is when the brand noticed. The decision happened earlier. The data showed it earlier.

AI-powered churn prediction changes the detection point. The model analyses the combination of signals that preceded lapse in your historical customer records: declining session frequency, shorter engagement duration, reduced content interaction depth, a shift away from high-intent pages. It identifies customers showing early versions of these patterns weeks before the standard inactivity threshold would trigger.
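The difference in detection point can be sketched in a few lines. This assumes a single signal (weekly session counts) and a fixed drop threshold as a stand-in for a trained churn model, which would weigh several signals learned from your own lapse history. The function names, thresholds, and data are hypothetical.

```python
def rule_based_flag(days_since_last_login, threshold_days=30):
    """Classic inactivity rule: fires only once the customer has gone quiet."""
    return days_since_last_login >= threshold_days


def early_signal_flag(weekly_sessions, drop_ratio=0.5):
    """Flag when the last 4 weeks average at most drop_ratio of the prior 4 weeks.

    weekly_sessions is ordered oldest first and needs at least 8 weeks of data.
    """
    if len(weekly_sessions) < 8:
        raise ValueError("need at least 8 weeks of session counts")
    prior_avg = sum(weekly_sessions[-8:-4]) / 4
    recent_avg = sum(weekly_sessions[-4:]) / 4
    if prior_avg == 0:
        return False  # no baseline activity to decline from
    return recent_avg <= prior_avg * drop_ratio


# Hypothetical customer: still logging in, but far less often than a month ago.
sessions = [6, 5, 6, 5, 3, 2, 2, 1]
days_since_last_login = 5

print(rule_based_flag(days_since_last_login))  # inactivity rule has not fired
print(early_signal_flag(sessions))             # declining pattern is already visible
```

This customer logged in five days ago, so the 30-day rule stays silent, while the decline in session frequency is already measurable.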

The output is not a different campaign. It is an earlier one, delivered when the customer is still engaged enough to respond. The channel is still email or SMS or push notification. The difference is timing and targeting precision. A re-engagement message sent to a customer who is still opening emails converts at a fundamentally different rate from the same message sent to a customer who stopped opening emails three weeks ago.

Where to Start

The question this article opened with was: which stage is worth tackling first? Here is the answer in five specific steps.

Step 1: Audit the quality of your existing customer data before touching any AI application.

Before any AI tool produces useful output, the input data needs to be reliable. Clean transaction records, consistent event tracking, and a usable engagement history are the prerequisite for every application in this article. An AI churn model trained on incomplete data is worse than no model.

Step 2: If you have an existing customer base, run a lapse rate analysis before investing in TOFU.

Identify what percentage of customers disengage each month, when the behavioural pattern preceding lapse first appears in your data, and which channels are still reachable at that point. This audit takes two to three weeks and tells you whether the retention AI opportunity is large enough to prioritise before awareness.
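A minimal sketch of the two numbers this audit is after: the monthly lapse rate and the share of lapsers still reachable per channel. The record fields and sample data are hypothetical, and it assumes DND status blocks SMS outreach.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    lapsed_this_month: bool
    on_dnd: bool
    opened_email_recently: bool


def lapse_rate(customers):
    """Share of the customer base that lapsed this month."""
    return sum(c.lapsed_this_month for c in customers) / len(customers)


def reachability(customers):
    """Share of this month's lapsers still reachable on each channel."""
    lapsers = [c for c in customers if c.lapsed_this_month]
    if not lapsers:
        return {"sms": 0.0, "email": 0.0}
    n = len(lapsers)
    return {
        "sms": sum(not c.on_dnd for c in lapsers) / n,
        "email": sum(c.opened_email_recently for c in lapsers) / n,
    }


# Hypothetical sample: 10 customers, 4 of whom lapsed this month.
customers = [
    Customer(True, True, False),
    Customer(True, True, False),
    Customer(True, True, True),
    Customer(True, False, False),
] + [Customer(False, False, True)] * 6

rate = lapse_rate(customers)
reach = reachability(customers)
print(rate, reach)
```

If reachability among lapsers is low on every owned channel, the intervention either has to fire earlier or move to a different channel.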

Step 3: Map your consideration stage signals.

List every distinct behavioural signal you currently capture from prospects during the evaluation phase: page visits, content downloads, email engagement depth, product page time, return visit frequency. If you have fewer than five distinct, trackable signals, you do not yet have a signal layer. The AI consideration tools, lead scoring and personalised trigger sequences, will not perform well without one.

Step 4: Evaluate your TOFU AI investment against one specific question.

Are you using AI to determine what to produce, or only to produce it faster? If the honest answer is only production speed, redirect at least a third of that investment toward audience intelligence work: intent clustering, demand signal analysis, and topic-to-conversion mapping on your existing content.

Step 5: Check your conversion asset structure for AI-referred traffic.

Is your pricing publicly accessible? Is your product information structured so that AI systems can extract and cite it accurately? These changes cost less than a landing page redesign and have an immediate impact on the conversion rate of AI-referred visitors who arrive pre-evaluated and need confirmation rather than persuasion.

If you want help auditing your funnel for AI readiness across these stages, reach me at shankar@shno.co.